Feedback on Books

Feedback on Generative Books

For Assignment #6, “Generative Book”, students were given two weeks to write code to generate a book. The students were required to generate the content of the book, as well as to execute or generate its layout automatically through code. Lastly, students were expected to present their book in a printed, bound format. The reviewers for this project were Nick Montfort, Katie Rose Pipkin, Kyuha Shim, and Golan Levin (the professor of this course). Feedback from these reviewers is compiled below.

Nick Montfort develops computational art and poetry, often collaboratively. Montfort has helped to establish several new academic fields and approaches: platform studies, critical code studies, and electronic literature. Montfort is a professor of digital literature at MIT, where he directs The Trope Tank, a DIY and boundary-transgressing research lab that undertakes scholarly and aesthetic projects and offers material computing resources.

Katie Rose Pipkin is a curator and multidisciplinary artist native to Austin, Texas. Pipkin works in language, with code and on paper. Pipkin makes drawings, as well as generative books and other computational artworks. Best known for creating Twitterbots, as well as generative poetry and other software, including games, Pipkin is presently a 2nd-year MFA student in the CMU School of Art.

Kyuha (Q) Shim is a designer, researcher and Assistant Professor in the School of Design at Carnegie Mellon University. Prior to coming to CMU, he held faculty positions at the Rhode Island School of Design and the Royal College of Art. He has worked as a researcher at the Jan van Eyck Academie and as a research fellow (Data Visualization Specialist) at MIT’s SENSEable City Laboratory.

Golan Levin, the director of this course, is an Associate Professor of Electronic Art at CMU, with courtesy appointments in Design, Architecture, Entertainment Technology, and Computer Science.

aliot

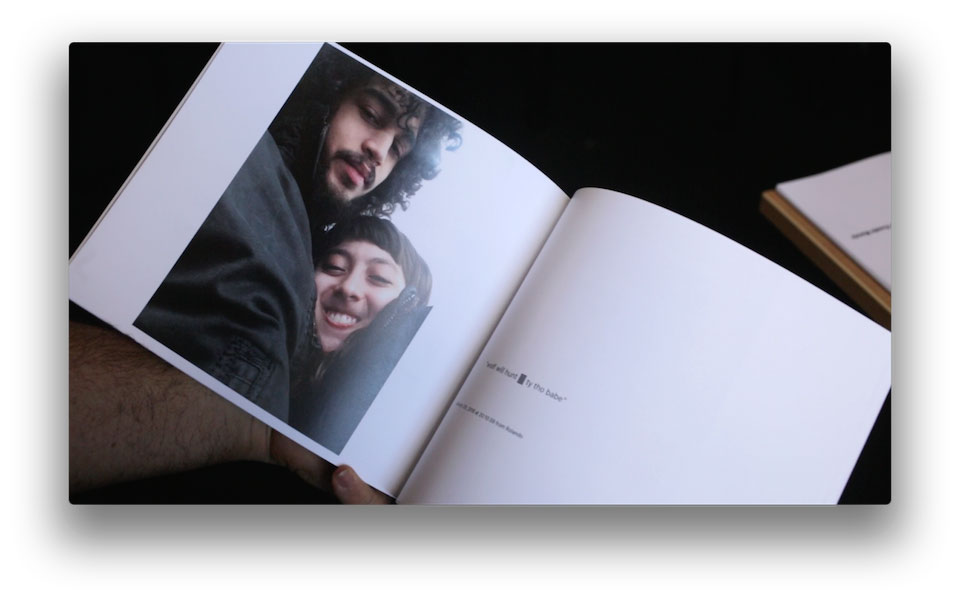

Nick Montfort: The word/image connection is good in this project. It seems a stretch to call this a computer-generated book, even if code was used to put it together. But since it was, and a book was made, for this assignment it seems all right. The book includes some photos and corresponding texts that were gathered, and could have been done in the 1960s with Polaroid photos as “What I Was Doing When You Called.” Also see long-term photo projects by Tehching Hsieh and Alberto Frigo. The book is about the everyday and the ordinary, but does it provide any insight into that, even for the author, who finds the results “unsurprising” and does not mention learning anything?

Katie Rose Pipkin: Congrats- I think this is a successful project. The conceit and the scope are just right; it feels personal without being too navel-gazy to become uninteresting. I think if you can continue working in similarly clever ways (with small personal datasets, manageable scope, etc) you’ll be very successful in generative projects.

Kyuha Shim: Nice remix of text messages, images, emails and screen captures of social media app. Unfortunately, the repetitive layout could get boring.

Golan Levin: I can’t decide if this is conceptually profound, or profoundly self-absorbed. Maybe it’s a bit of both. That’s not a bad thing: it’s okay to make a book just for yourself. The algorithmic work is assuredly quite competent. One complaint: The graphic design feels arbitrary and unconsidered, and could have SO much more strongly supported the book’s concept. For example, if the book had used a vertical format (rather than landscape), you could have adopted the vertical aspect ratio of the cellphone selfies, while also eliminating those painful oceans of white paper flanking your images. I.e., make the book shaped like a cellphone.

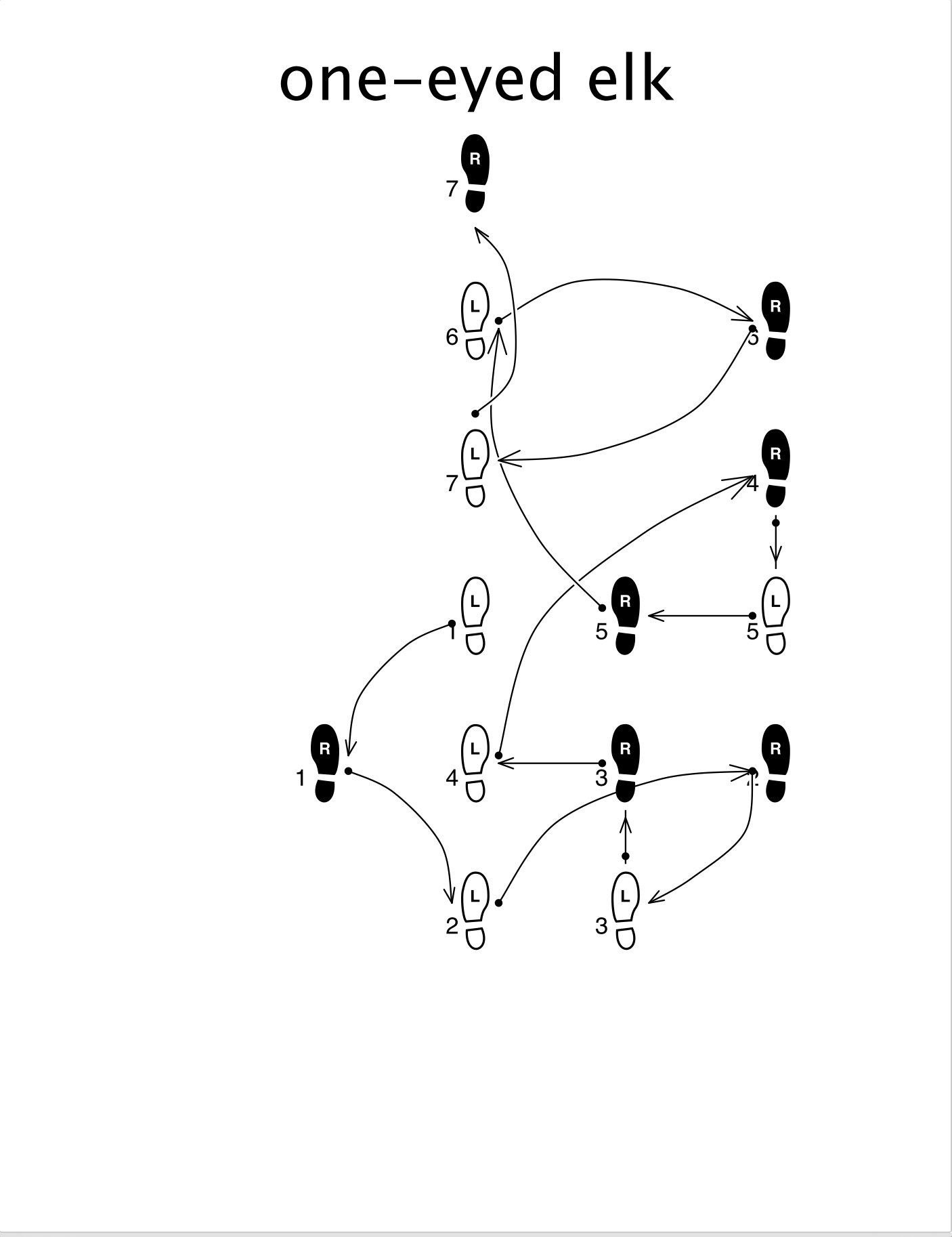

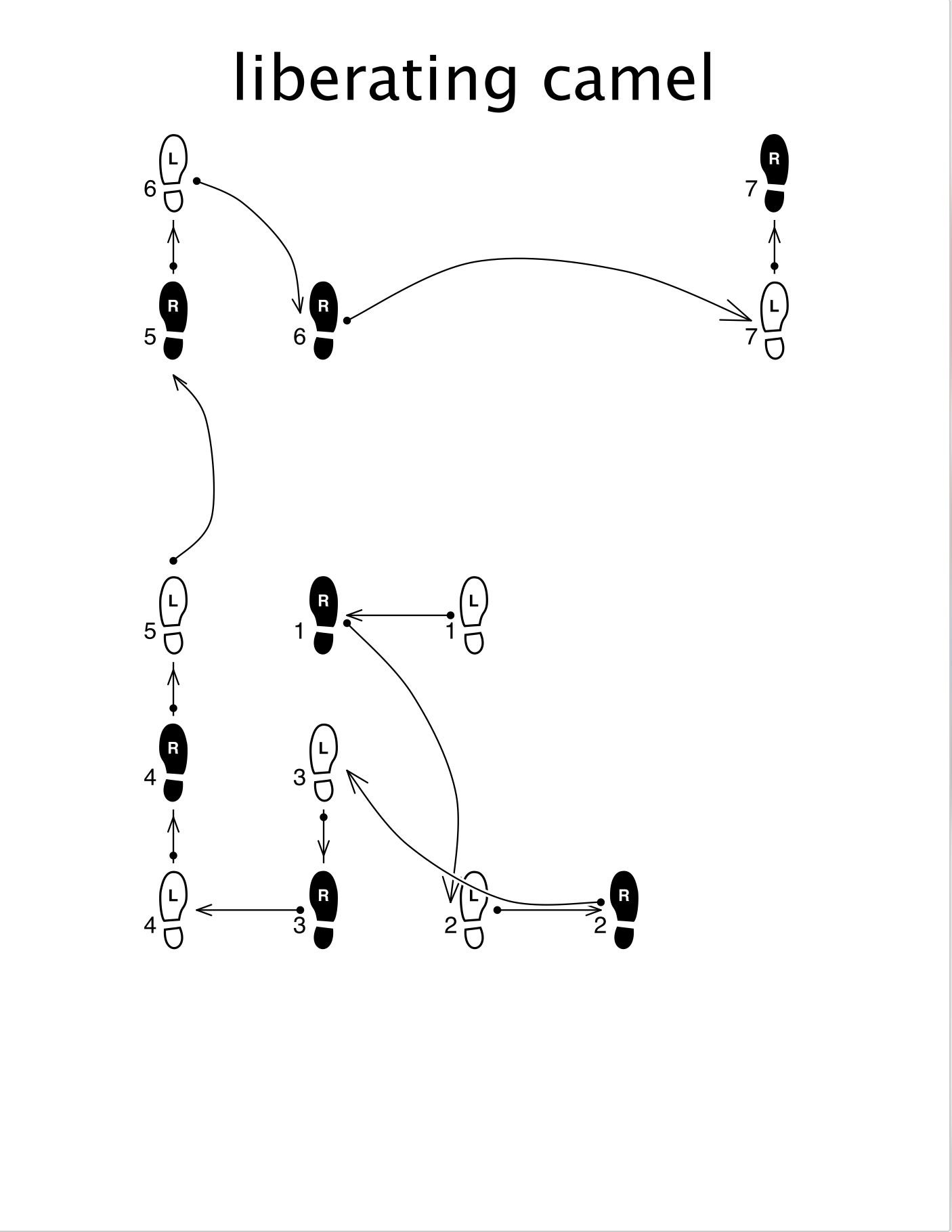

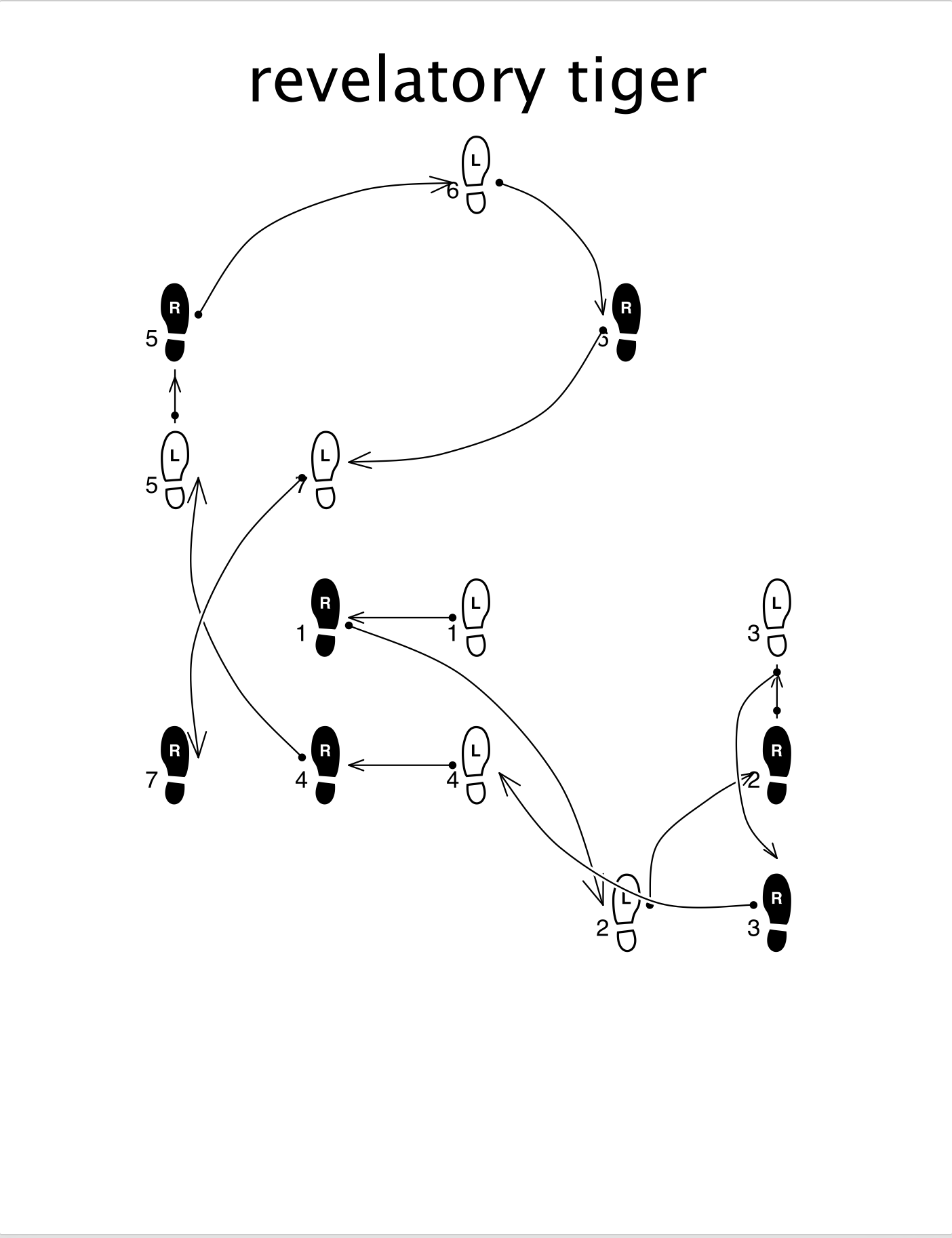

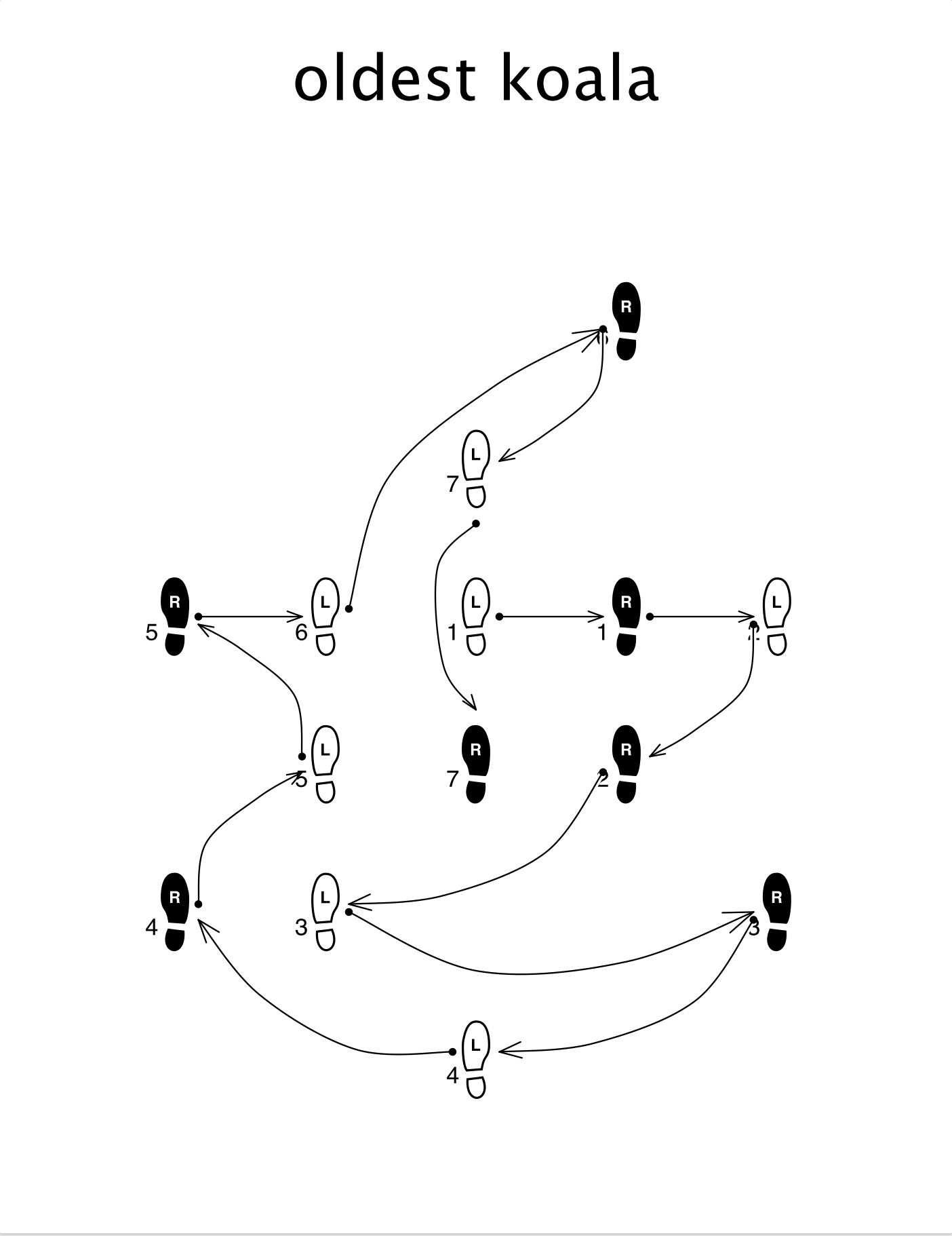

anson

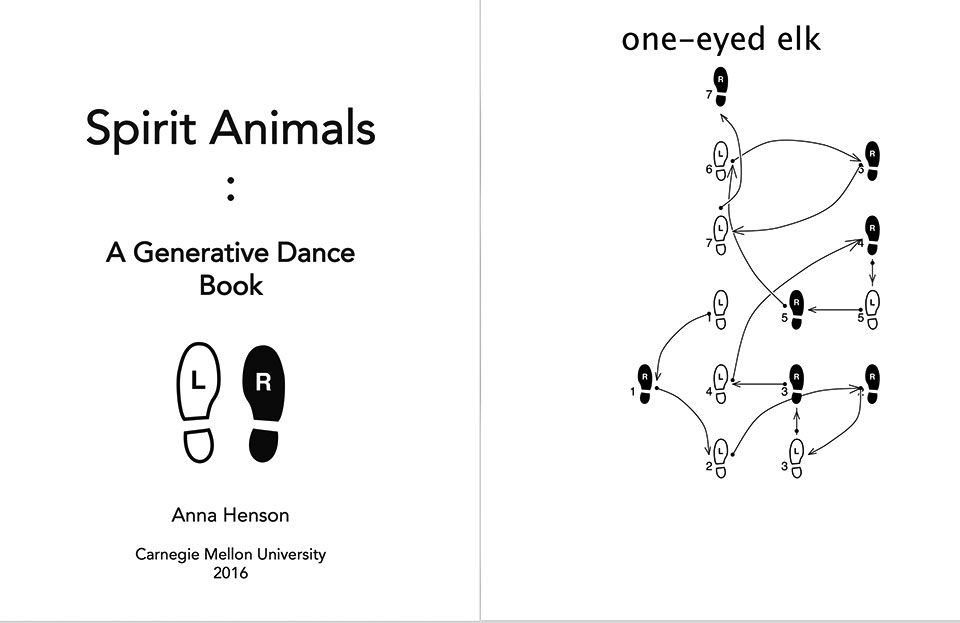

Nick Montfort: Although I’m not convinced by how danceable or dance-related these diagrams are, it’s overall quite effective to pair generated dance with combinatorial names, and some cultural and artistic background is brought into the project effectively. The design of the book is not very inspired. Why this number of dances and not more or less? Why no folios (page numbers) or running heads? Some of the numerals are obscured by arrow heads in the layout of diagrams. All your dances (like Warhol’s) are for one person?

Katie Rose Pipkin: This is a cute idea, and it is clear that you went all out on implementation. I’m happy to see generative work meant to be applied to the world at large, and hope you might consider conscripting some friends to attempt to perform some of these as part of your documentation. I think that the interaction between human systems (like dance) and machine systems (like eternal procedural generation) is where the field gets interesting. Just a note- when I came by to look at the books, I was under-impressed with the print job; unstapled 8.5×11 paper does not really make a book. Next time consider even doing something simple, like a stitch binding! The work will feel stronger for it.

Kyuha Shim: Interesting concept, but it is not clear how the ‘animal outlined foot patterns’ were generated. Reducing the size of text and bottom margin of your master spread would improve the overall composition.

Golan Levin: Intriguing and pleasing: a unique concept, a charming idea. The concept has enough depth that one could spend a week developing it, or a whole year. Technically, this project was a nice microcosm of different technical challenges. I feel you would benefit from more practice solving the sorts of problems you encountered here.

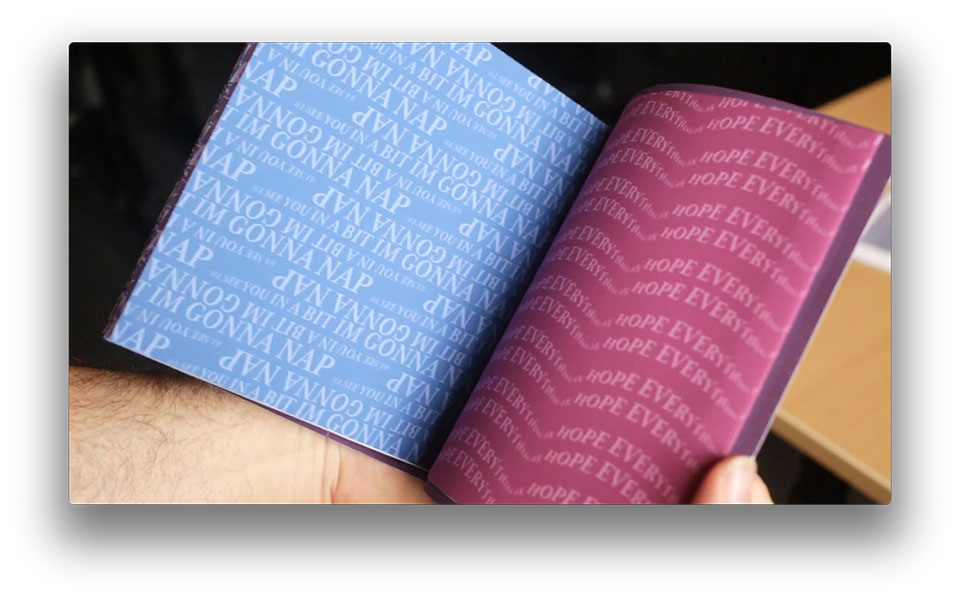

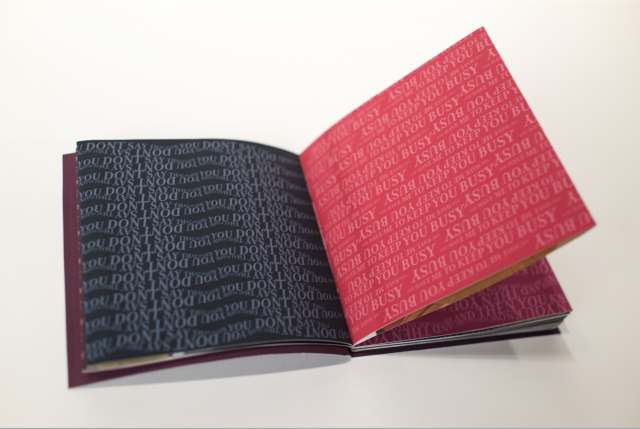

antar

Nick Montfort: The book concept and production is great. The “tease” of the inside page images might be even more effective if there were text to read more typically, rather than “wallpaper,” on the other side. But if you are going to Twitter to look for the way people use language there, why “clean” the tweets? “I still wanted my content to be sophisticated language” is not an answer, any more than “I wanted to give you a very low grade” justifies that. Why? Why edit the tweets you are appropriating from other people? It seems okay to include “IM” for “I’M,” so what makes the language wrong or in need of correction? It could be justified to remove “okay babe” since you have that as the title, but if you make other changes, those need a justification rather than just an impulse.

Katie Rose Pipkin: It is clear that you have a strong sense of design, and that your considerable skills in color, layout, and text work have paid off to your benefit here, in the form of a nice little book. I have very little to critique in that field, and think it is successful as an aesthetic object. That being said, I’m not so impressed by the content- the rehashing of ’70s Playboy with phrases off Twitter in service to a personal brand is off-putting to me at best. I think you’re good at advertising, but I’m not sure if I buy that what you’re selling is of value to me. I’m not telling you that you have to care – but if this were my personal brand, I’d think of ways that were a little bit more complicated to approach it.

Kyuha Shim: The typographic patterns are visually appealing and the french-fold binding works well. The typography, however, lacks relevance to Playboy images.

Golan Levin: It’s clear that you really enjoyed the process, and I’m glad you got a good taste of a powerful new tool. The book itself is repetitive, and seems conceptually arbitrary; the relationship between the pinup imagery and the typographic play is unclear to me, and seems like a private joke. I’d recommend a follow-up experiment where you just focused on content-driven computational manipulations of type, without the requirement to make a book.

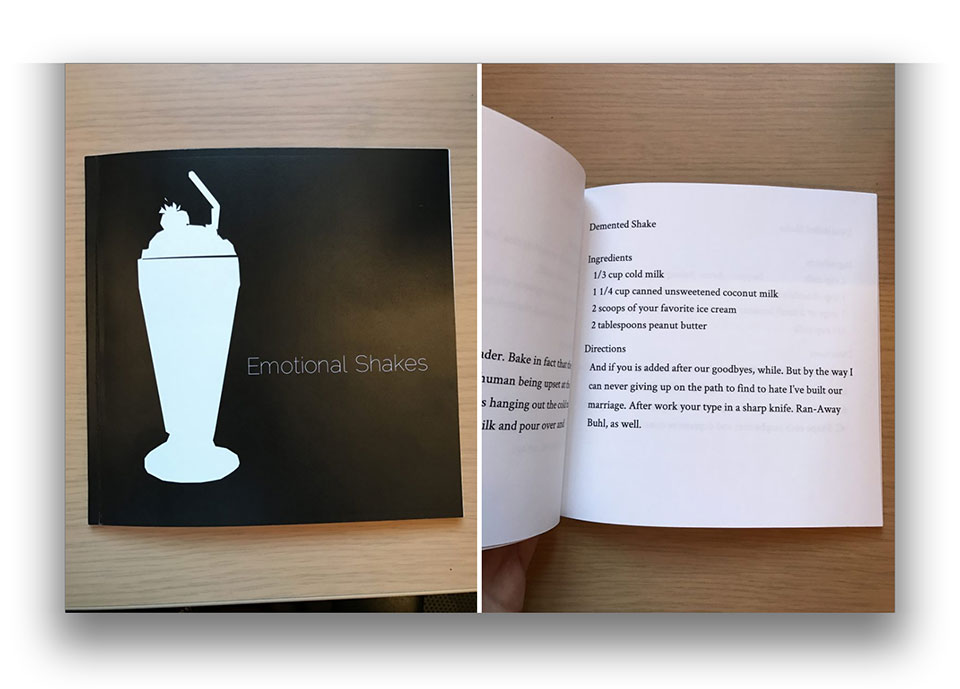

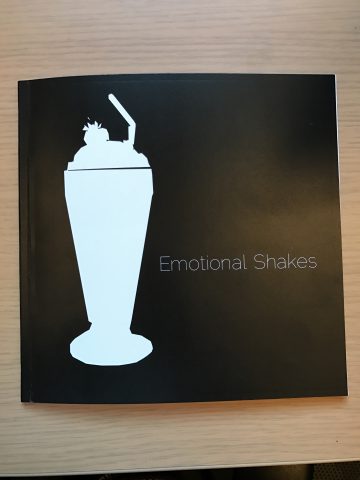

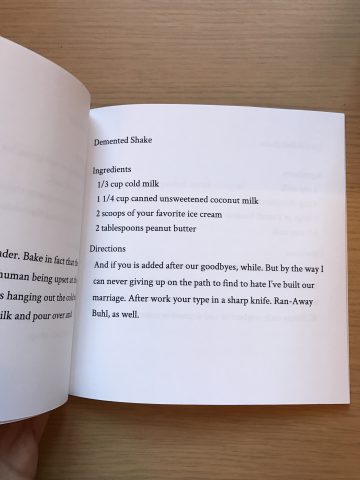

arialy

Nick Montfort: It’s amusing to generate instructions, including recipes, and since a milkshake is a smooth combination of ingredients it may be particularly funny in this case. There is real academic work in computer-generated cookery and mixology, for instance the Pierre, Chef, Julia, the Mixilator, and a more recent UCSC mixology system. Also, see Harry Mathews’s Country Cooking for a good story told as a recipe. The concept is good here but the follow-through is not as good. The Markov-generated “Directions” are ungrammatical in a way that makes them less pleasant. Other techniques (grammars, combinations of appropriated texts, etc.) could have worked much better. The four ingredients are sampled independently, so it’s possible for two, or even all four of them, to be the same thing. Sampling should be done without replacement. Also, book design: No folios (page numbers), table of contents, running heads? Your first recipe is on the back (verso) of the title page? The whole framework did come together, despite these problems, and the idea is clever.
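
The sampling point above can be illustrated with a minimal Python sketch. The ingredient list and function names are hypothetical and are not taken from the student's code; the sketch only contrasts independent draws with `random.sample`, which picks distinct items.

```python
import random

# Hypothetical ingredient pool; the student's actual list is not shown in the review.
INGREDIENTS = [
    "vanilla ice cream", "milk", "banana",
    "chocolate syrup", "peanut butter", "strawberries",
]

def pick_with_replacement(pool, k, rng):
    """Independent draws: the same ingredient can come up more than once."""
    return [rng.choice(pool) for _ in range(k)]

def pick_without_replacement(pool, k, rng):
    """random.sample draws k distinct positions, so a duplicate-free pool yields no repeats."""
    return rng.sample(pool, k)

if __name__ == "__main__":
    rng = random.Random()
    print("independent draws:  ", pick_with_replacement(INGREDIENTS, 4, rng))
    print("without replacement:", pick_without_replacement(INGREDIENTS, 4, rng))
```

With independent draws, a recipe calling for "milk, milk, banana, milk" is possible; with `random.sample` the four ingredients always differ, provided the pool itself has no duplicates.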

Katie Rose Pipkin: A cute idea that (to me) suffers from too much repetition with little reward for the reading. Recipes are a rich area for generative work (they follow a 1-2-3 kind of formula that is easy to reproduce by automation), but the work then becomes: how to keep them from feeling formulaic? Given time constraints, I can see why you landed where you did. In truth, though, I think the failure here is in integration of the two sources; the hate-letters still read like hate letters and the milkshake recipes still read like recipes and the lines between sources are fairly clear. I would focus on how to generate systems that really speak to your goals- think about what you would do to make a hate-smoothie (do you add human hair? do you whisper condescendingly to the milk?) and revisit.

Kyuha Shim: I do not think this project makes sense.

Golan Levin: Fine start. I wish the recipes had more surprises; they’d be more enjoyable to read. How to achieve?

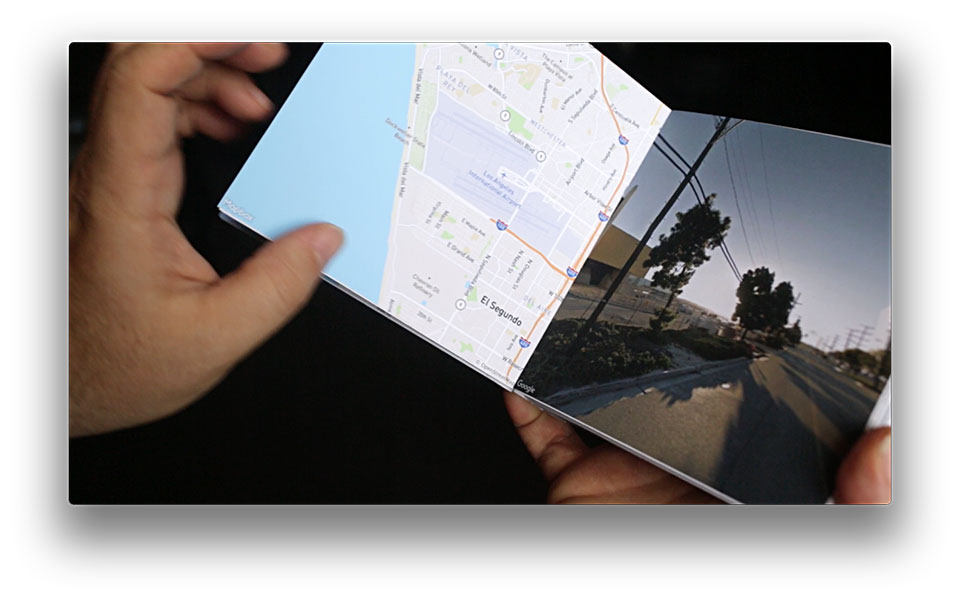

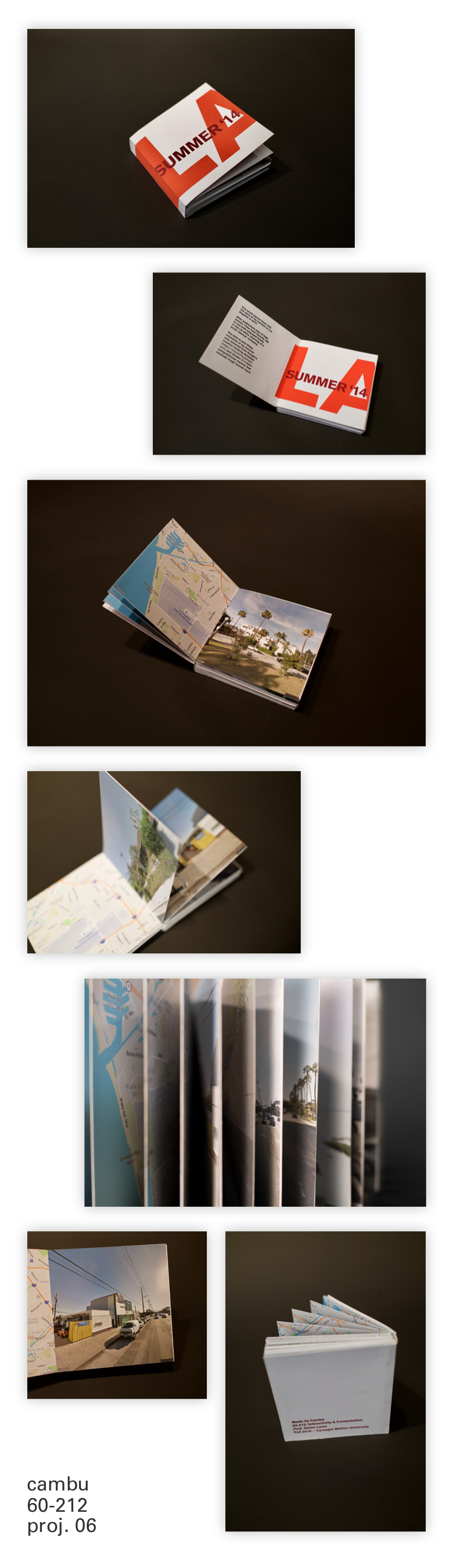

cambu

Nick Montfort: This is well-executed, with good design and production. It’s data-driven rather than algorithmically generated; okay. I just wonder in the end why your LA summer was interesting, to you or anyone else, and how the book focuses on its interesting aspects or brings new ones to light. I don’t mean this insultingly; we should always wonder what’s interesting about the subject matter of our work. And, it’s okay to do a project not knowing exactly why the subject is interesting … but one hopes to find out. Is your book mostly about you, LA, or Google? Could it be more about one of those in an interesting way? Would you need to make a different book for each reader to get the uncanny effect you mention? Could the process of creating the book, and generating the original data, be represented any better in the book itself?

Katie Rose Pipkin: Impressive both technically and conceptually, but falls flat in the actual final result- the emotional response you describe to seeing your deja-vu summer is unavailable to me, and with little other context the scattering of locations fails to build a story I care about. I would ask you to consider charting google maps paths that may be resonant for a public at large; for example, what if the locations were not /yours/ but a story we all know- MLK’s March on Washington, or the sites visited by the last retiring space shuttle, or any other story that has meaning to a culture, not just to you.

Kyuha Shim: It would be interesting to see multiple data sets, in addition to your location data, (e.g. weather, instagram photos) for remix.

Golan Levin: Attractive, and technically proficient, but dry. All the feels remain in your head; even, painfully so. If this object is unsuited to sharing with others, give yourself permission to say so.

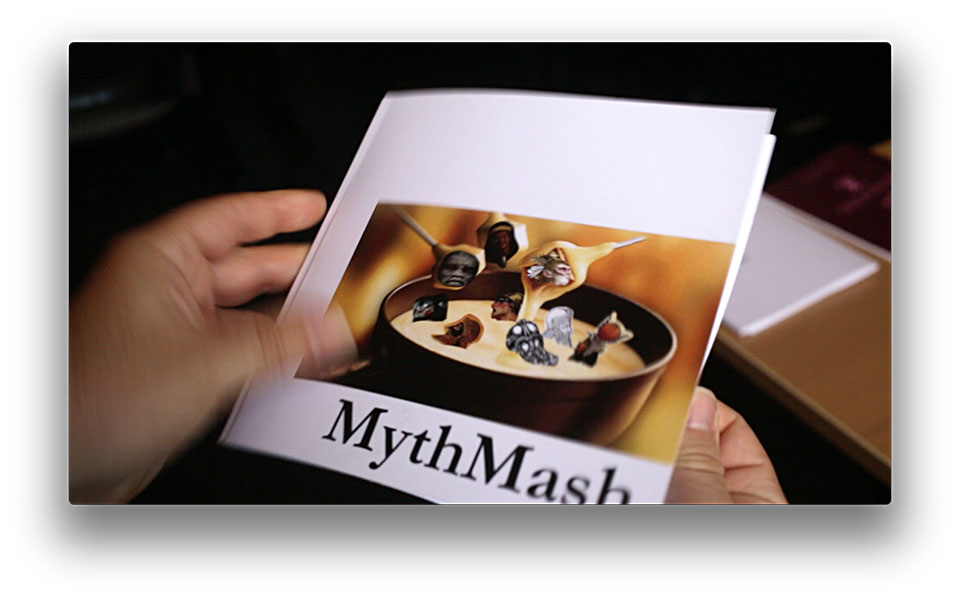

catlu

Nick Montfort: Nice cultural engagement, and good to be expansive with ideas across cultures. I think the project invites some close reading and thinking about how myths are in some ways not compatible and in other ways can be combined. Why label it “whimsical” and use “mashup” on the title page? What if someone who was interested in world religion found it not so whimsical? If you want to combine two different source texts, consider techniques beyond the use of conditional probabilities on word pairs or triples (Markov chain generation). The method you are using now loses any connection between sentences, which is why pronouns appear (“she,” “they”) that have no referent. This is a good start and I encourage you to continue the project, understanding this text-generation technique and others in detail. Also, book design: No folios (page numbers), table of contents, running heads? Your first recipe is on the back (verso) of the title page?
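
To make the technique named above concrete, here is a minimal word-level Markov chain sketch in Python. It is an illustration under stated assumptions, not the student's implementation: the function names and the `order` parameter are hypothetical. With `order=2` each next word is conditioned only on the previous two words (word triples).

```python
import random
from collections import defaultdict

def build_chain(text, order=2):
    """Map each run of `order` consecutive words to the words that follow it in the source."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        state = tuple(words[i:i + order])
        chain[state].append(words[i + order])
    return chain

def generate(chain, length, rng):
    """Walk the chain from a random starting state for up to `length` words."""
    state = rng.choice(sorted(chain))
    out = list(state)
    for _ in range(length - len(state)):
        followers = chain.get(state)
        if not followers:
            break  # dead end: this state only occurs at the very end of the source
        out.append(rng.choice(followers))
        state = tuple(out[-len(state):])
    return " ".join(out)

if __name__ == "__main__":
    source = "the sun rose and the sun set and the moon rose"
    print(generate(build_chain(source), 8, random.Random()))
```

Because the state holds only the last two words, nothing earlier in the text can influence the next choice. That is why a generated "she" or "they" can appear with no referent.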

Katie Rose Pipkin: As much as I’d like to like the result of this project, I just can’t get into it- the Markov chain results are too scattered for me to try to construct a story, mythological or otherwise, and I can’t help but wonder if it is a responsible way to handle looking at similarities of cultural and historical text. Myths are weighty subjects, and I would be wary of treading into waters that might be called appropriative if shown at large. Perhaps it would be worth looking into some ways that classicists have already tried to analyze myth, for better or for worse- there are neat resources like https://sites.ualberta.ca/~urban/Projects/English/Motif_Index.htm that can become interesting data for generative projects. Using second-hand data has the benefit of a layer of objectivity or criticality; you can work with structures without becoming complicit. From a formal standpoint, I think you might benefit from imposing some structure on your Markov chains- retaining a single subject could do wonders for legibility, for example.

Kyuha Shim: Your project inquiry is very interesting, but it is difficult to see how the generated stories retain the cultural and regional aspects. Perhaps you could include sentiment and text classification analysis of input and output texts.

Golan Levin: A very good start. Admittedly, the final result has that unmistakable “Markov chain” flavor. I consider it a success for a first experiment. Deserves to be read aloud.

claker

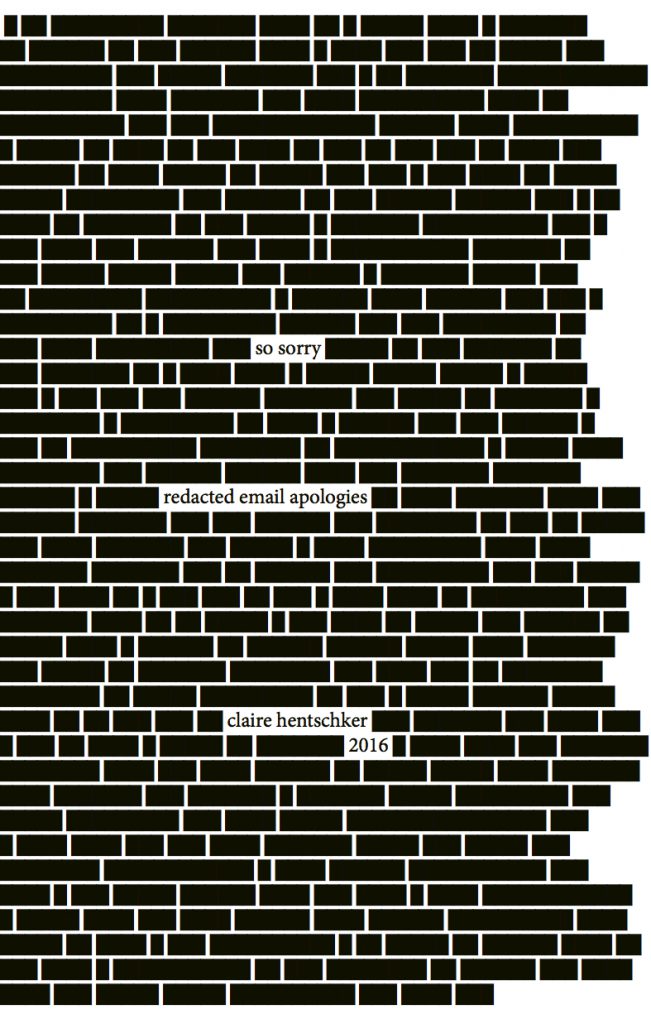

Nick Montfort: This is a fine project, and seems to offer some insight about language, personality, and experience, even to those who do not know you. The cover image is suitable and pleasing. I particularly like that these emails were deleted as the project was done, so that now, only Google has them. This is a personal-data project with a bit of generation, although I would note that the use of JSON and p5.js seems a bit elaborate. I did an approximation of this (per line rather than per sentence) in a few seconds on my email with `$ cd mail` followed by `$ grep "sorry" sent-mail*`.
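
For comparison with the per-line `grep`, a per-sentence filter can be sketched in a few lines of Python. This is an illustrative sketch, not the student's JSON and p5.js code; the function name is hypothetical and the sentence splitter is deliberately naive (it breaks after `.`, `!` or `?` followed by whitespace, so abbreviations will confuse it).

```python
import re

def sentences_containing(text, word):
    """Return the sentences of `text` that contain `word` as a whole word, ignoring case."""
    # Naive splitter: break after ., ! or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    pattern = re.compile(rf"\b{re.escape(word)}\b", re.IGNORECASE)
    return [s for s in sentences if pattern.search(s)]

if __name__ == "__main__":
    sample = "Sorry for the late reply. The file is attached. I'm so sorry about that!"
    for sentence in sentences_containing(sample, "sorry"):
        print(sentence)
```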

Katie Rose Pipkin: A beautiful book, really. You’re successfully using personal material in a way that incriminates all of us- after all, who among us does not have an outbox full of apologies, both deserved and otherwise. I also think your solution to preserving the anonymity of your correspondences is clever, and leaves the reader with enough context to situate each sorry. My only real side note: I found the physical book lacking (unstapled 8.5×11 paper, folded) and hope you will pursue another printing in the future- I think it deserves it.

Kyuha Shim: Missing/incomplete at time of review.

Golan Levin: Brutal. Your design iterations really helped; the final result shows evident care. I’m still waiting to see the professionally printed version.

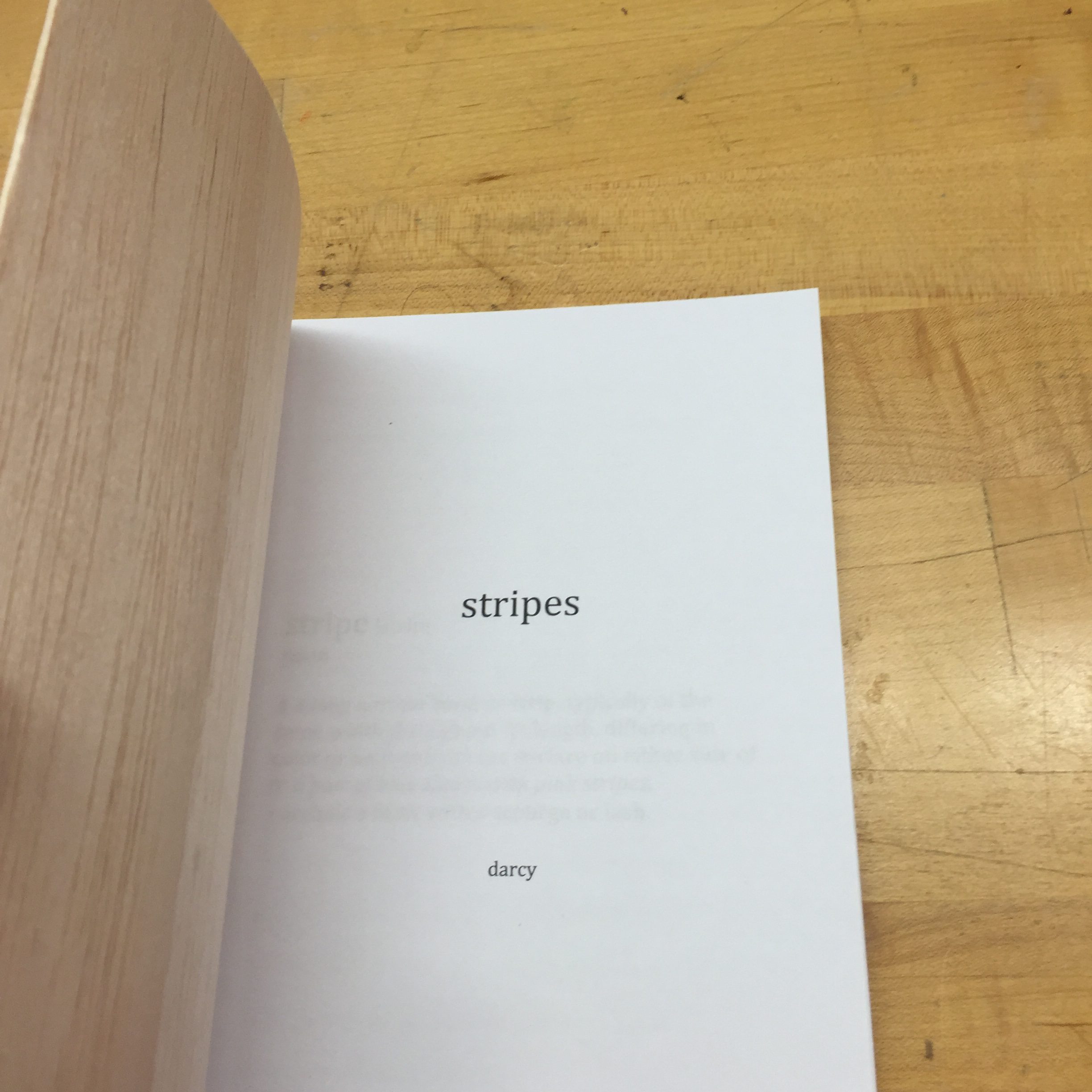

darca

Nick Montfort: The GitHub link does not work; I can’t see the code or read a PDF. There’s some evidence a bound book was created. Ask to have this grade raised if the code and PDF were handed in.

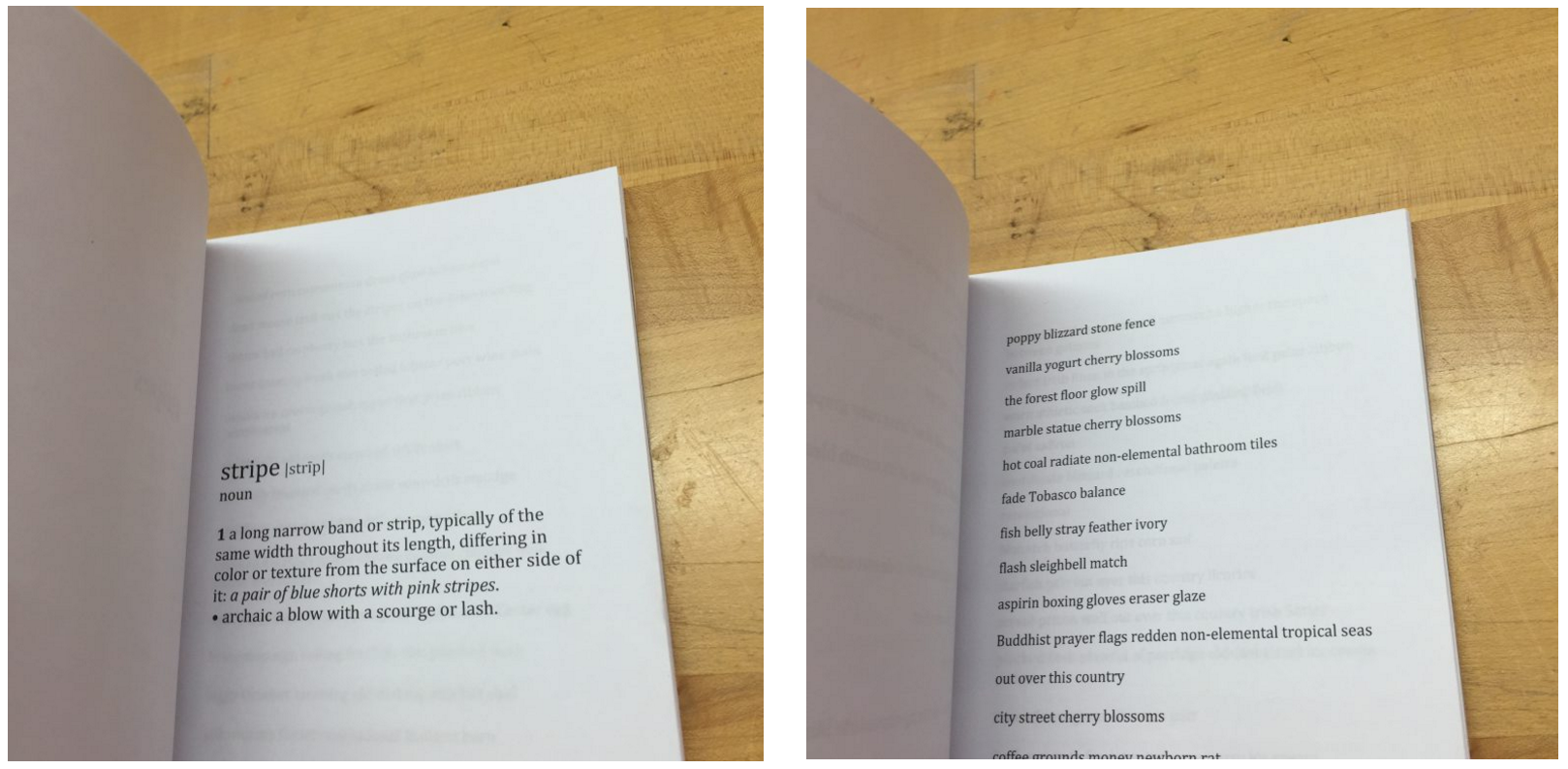

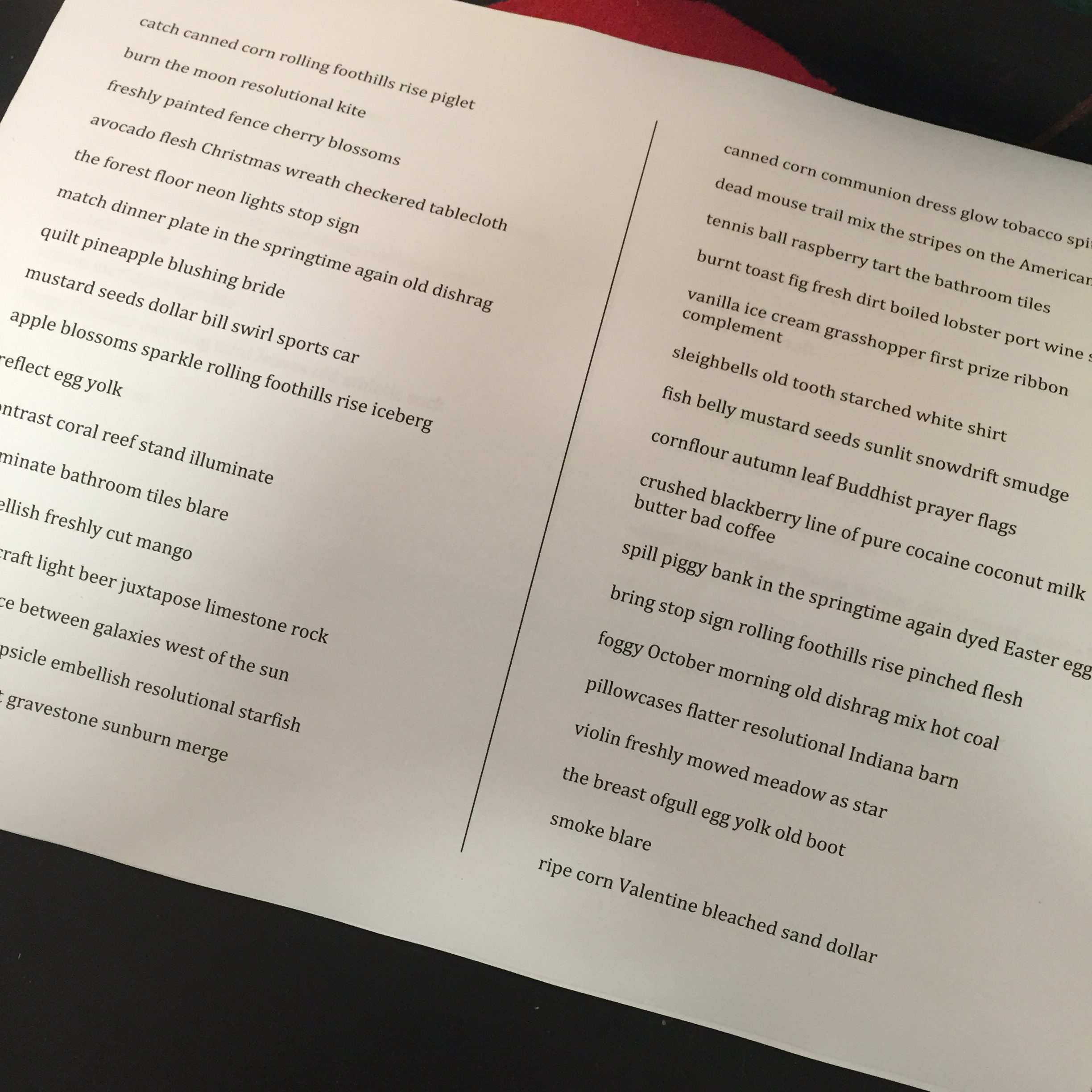

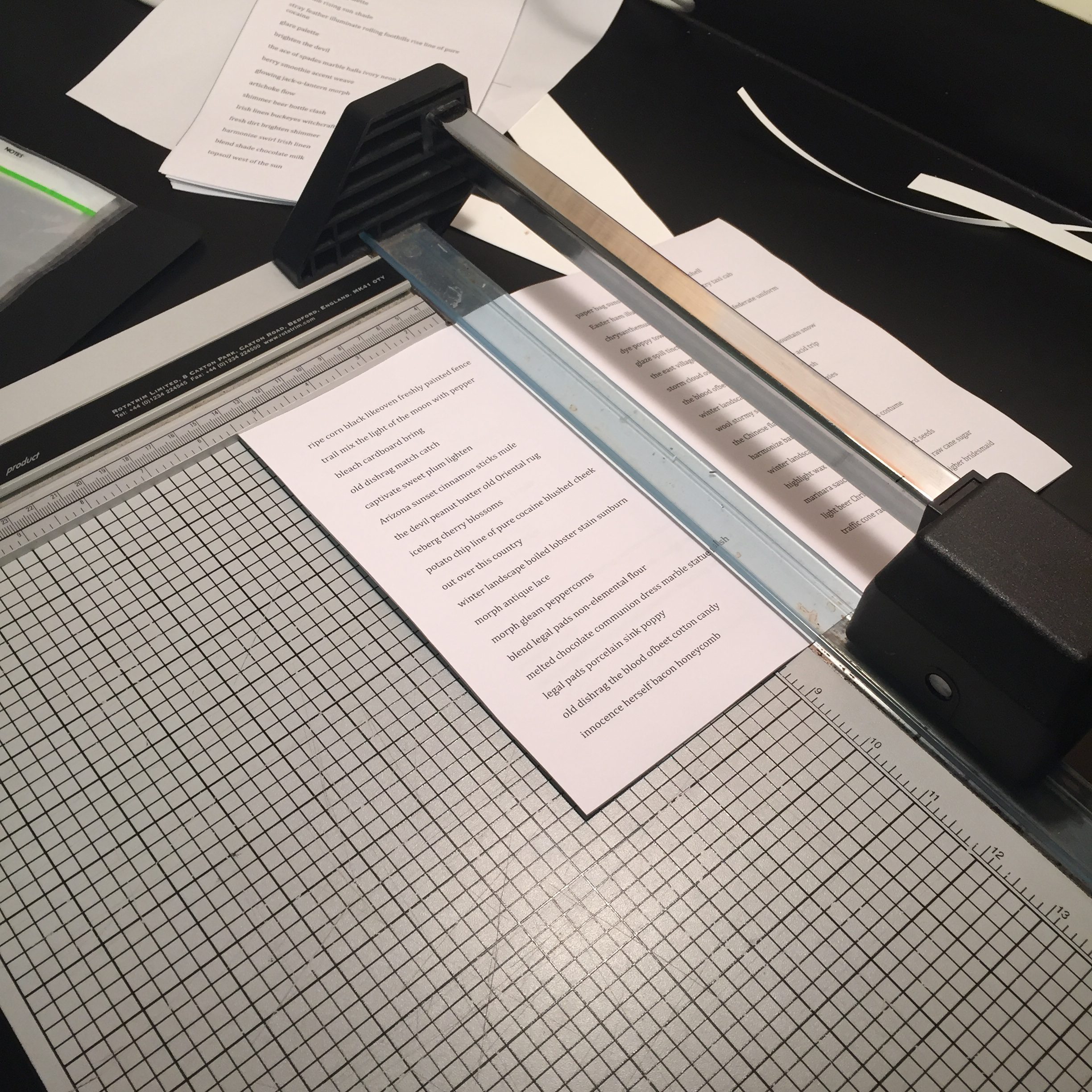

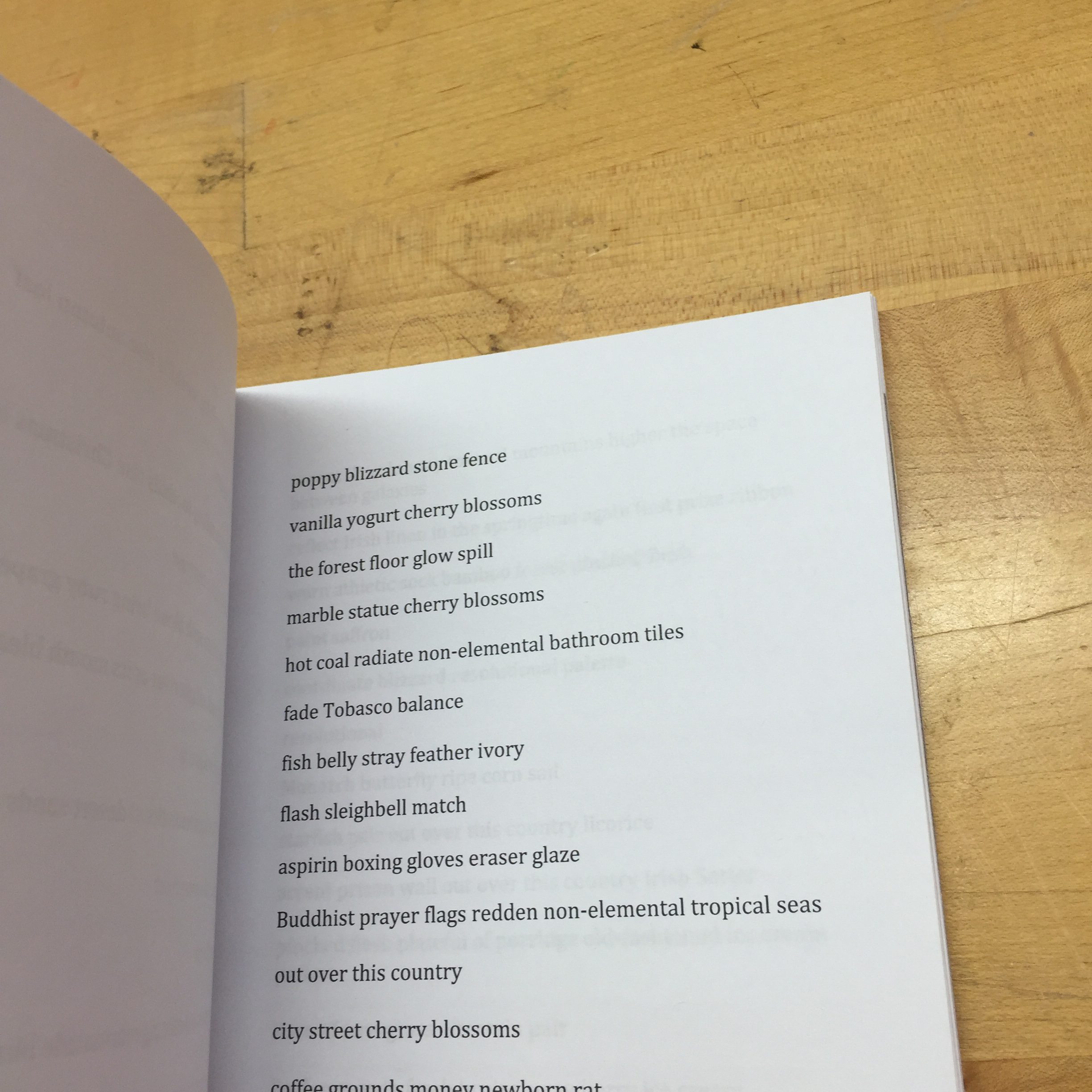

Katie Rose Pipkin: A simple, clean idea, perfect for the scope of a small project. That said, I can’t say that I was particularly enthralled by the content of this book- it isn’t one I’d sit down and read, however evocative the wording of each color. Perhaps it is an idea better suited to a shorter format, more digestible as individual moments than the weight of a single volume. Still, you did your best to make a book of it regardless, and I felt you deserved a little extra credit for the beautiful binding. It’s clear you sank a lot of time and thought into it.

Kyuha Shim: Missing/incomplete at time of review.

Golan Levin: Apart from the fact that the book was late, there are some urgent problems of execution here, chiefly related to your documentation. (1) You were asked to upload a PDF of your book, or a link to a PDF of your book. Without the PDF, it is impossible to read and evaluate your book: the tiny photographs from your bookbinding process are not sufficient to read and evaluate your book. Simple things like “uploading a PDF” are easy to do, and make a big difference in demonstrating that you are careful, respectful, and understanding of your reviewers’ needs. (2) Although you describe your inspirations, you did not describe how you generated the text of the book. What was the process? What sort of computational approaches did you use? (3) You were asked to provide your code. However, you did not embed your code. Furthermore, there is no code or repository at the Github link you provided in the blog post; it’s a dead link. (4) I’m writing this six days after providing you with the above feedback by email, and these issues have still not been addressed.

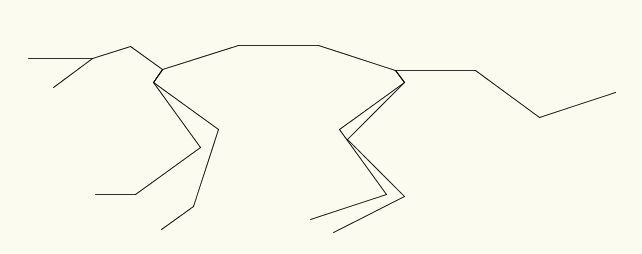

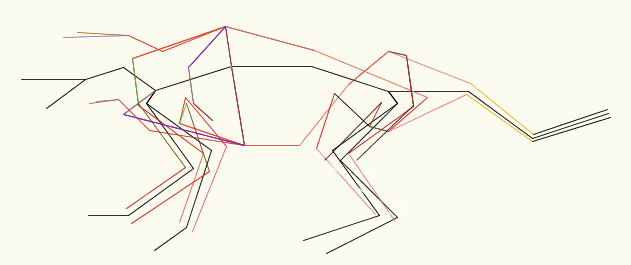

drewch

Nick Montfort: The technique that is sketched here has some potential and results in visually pleasing output. The PDF file is in bad shape (for instance, not rotated correctly) and in any case the book “must be printed and bound.”

Katie Rose Pipkin: The concept and content of this book is truly lovely, and I have little to say other than keep it up. Unfortunately, as the assignment was to make /and print/ a book, I feel that I’m really unable to give you the grade I would have liked. Even just printing on half-decent paper on any old printer and doing a stitch binding can make a beautiful object. I’d advise you in the future to not give in too quickly to the (admittedly, very real) pressures of economy, and to think about the resources that are at hand.

Kyuha Shim: Needs more documentation. ‘Legible letters’ are not legible in your work right now. Might make more sense if viewers interact with this on screen (so they can zoom in). You can highlight important words through color with blend_mode rather than opacity.

Golan Levin: Exhausting to read, but I see what you’re getting at. I do wish you had found the energy to print it. What would you think about an approach like automating what Tom Phillips does in ‘A Humument’?

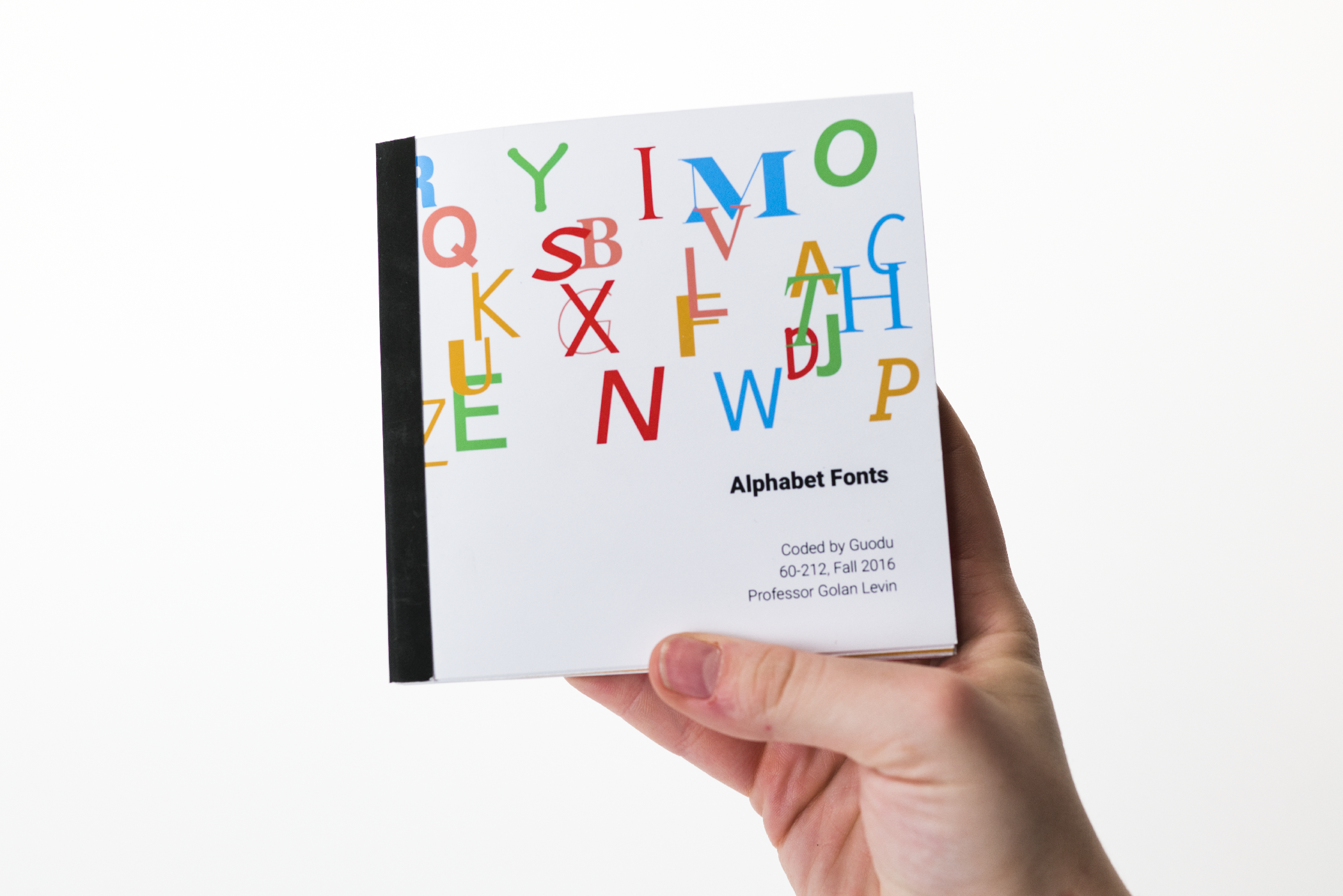

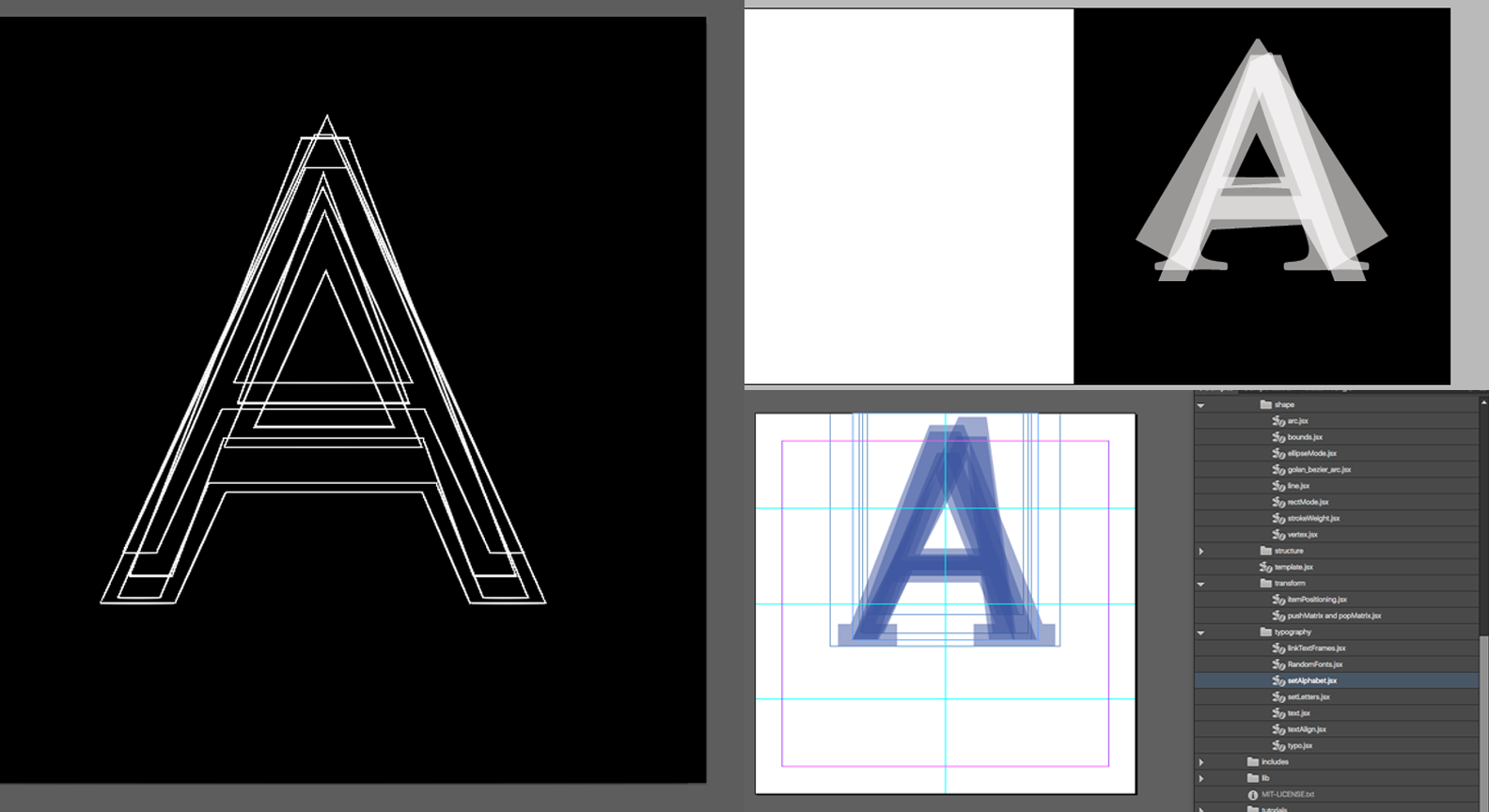

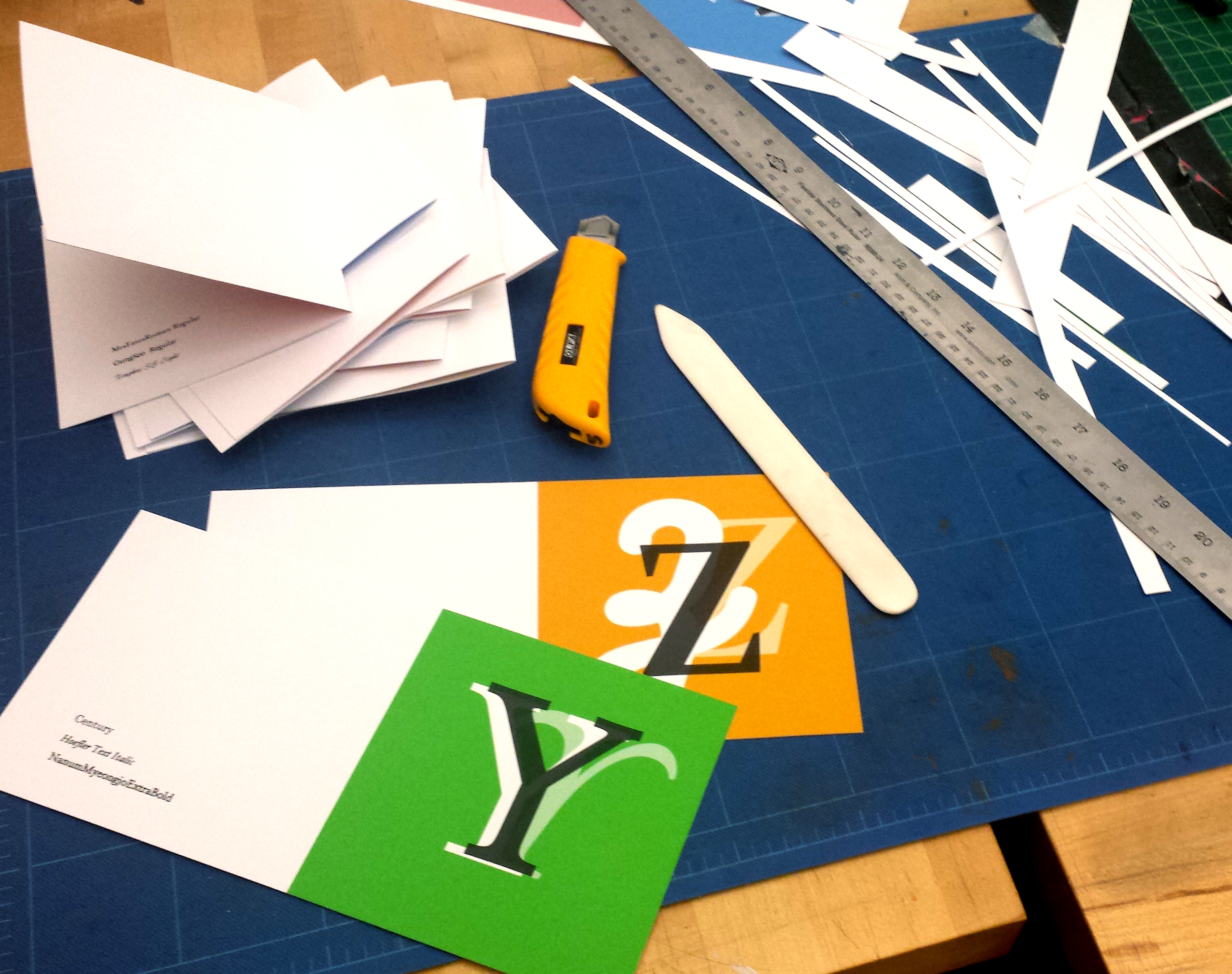

guodu

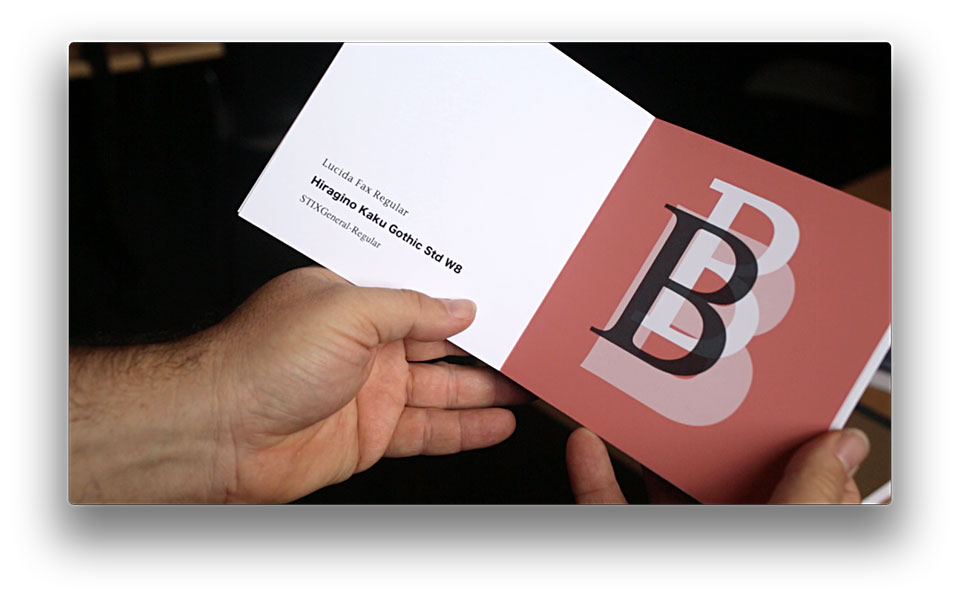

Nick Montfort: “Do people feel like I just did this by hand in InDesign, no scripting? or is it obviously programmed? or both?” I would say the former, which does not mean you failed to fulfill the assignment, but that the generative abilities of the computer were not used to accomplish something new. I like the ABC book concept, the appropriately colorful presentation, and the idea of bringing typography to very young readers. It could (and should) be worked on further. It seems a bit awkward that some letterforms, and important features of them at times, are hidden behind others. I am not sure that guessing which font is which is a good pedagogical strategy. But there are many pleasing things about this book.

Katie Rose Pipkin: First off, this is a pretty book. The obvious care in the printing and binding shows, and your eye for layouts (generative or otherwise) is clear. I like the conceit of Baby’s First Font Book, and it does have the aesthetic of a primer. My tastes skew more towards the conceptual (‘but what does it mean?’ etc), but this is a nice project regardless. I think your self evaluations of where to go next are accurate, and I could see more font specification and color options helping. Keep it up!

Kyuha Shim: The legibility could be improved. If you color-code each typeface, viewers may be able to intuitively figure out which one is which.

Golan Levin: If you had solved the problem of selecting fonts whose names began with the letter shown on each page, I’d give this otherwise excellent book a much higher grade.
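
One way to approach the font-selection problem mentioned above is to group the available font names by their first letter. The Python sketch below assumes a plain list of names; the list and the function name are hypothetical, since the review does not say which fonts or layout tool the student used.

```python
import string

# Hypothetical font list; the fonts available to the student are not listed in the review.
FONT_NAMES = [
    "Avenir", "Baskerville", "Bodoni", "Courier",
    "Didot", "Futura", "Garamond", "Helvetica",
]

def fonts_by_initial(names):
    """Group font names under the uppercase letter each name starts with."""
    groups = {letter: [] for letter in string.ascii_uppercase}
    for name in names:
        initial = name[:1].upper()
        if initial in groups:  # skips empty names and names starting with a non-letter
            groups[initial].append(name)
    return groups

if __name__ == "__main__":
    groups = fonts_by_initial(FONT_NAMES)
    for letter in string.ascii_uppercase:
        print(letter, groups[letter] or "(no matching font)")
```

Letters with an empty list show where the font collection would need to be extended or a fallback chosen.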

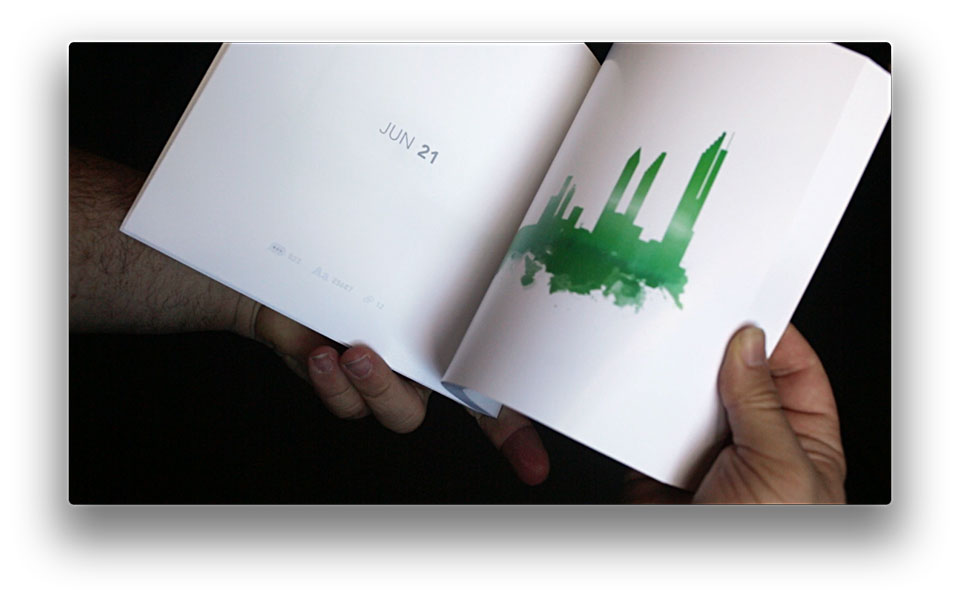

hizlik

Nick Montfort: This is a good metaphor and a pleasing visualization. As a personal-data project this one stands out by not insisting too much on private texts and language and presenting images that are pleasing and that provoke the imagination, even if their basis is not understood. It’s possible that the watercolor effect is corny, but it does balance the rectilinear buildings above.

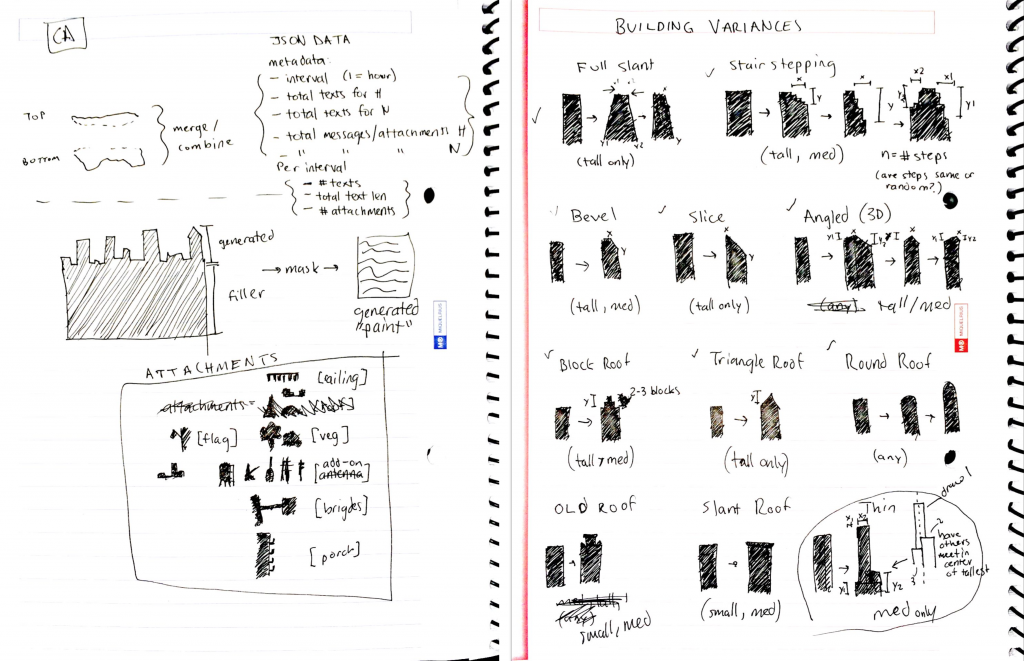

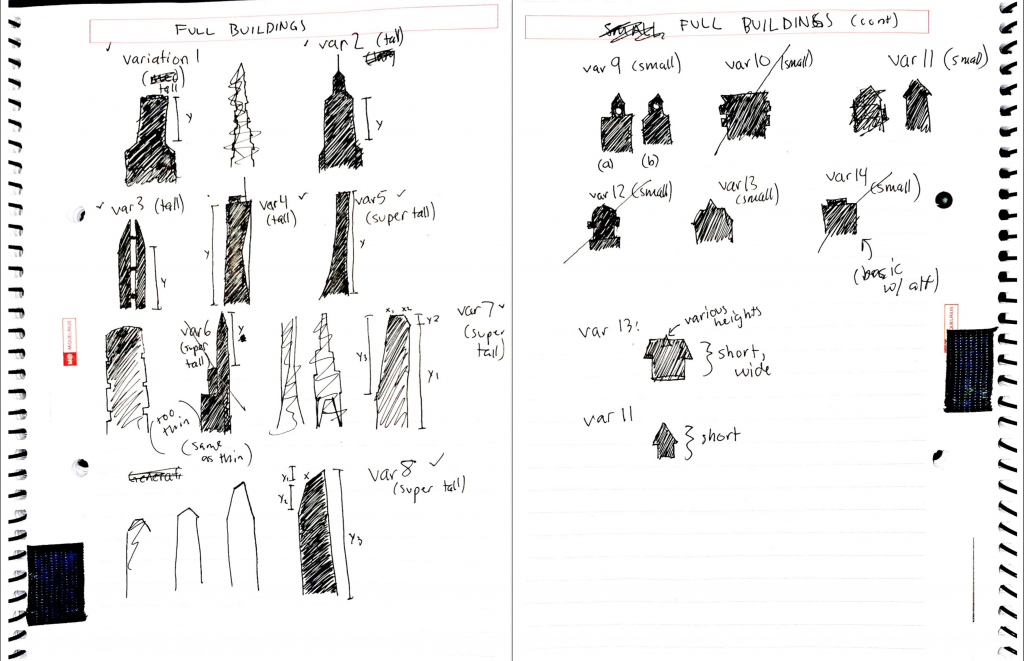

Katie Rose Pipkin: My first thought flipping through this book in the studio was that it was beautiful, but I sure wished it was based on data of some kind. Later, reading your project page, I found that it /was/ and I felt both a bit stupid for missing the markers of numbers of messages/attachments/etc, as well as disappointed I’d been allowed to miss them. I find I often err on the side of underexplanation in my own work, hoping my objects will explain themselves for me. Often, what I need is just a paragraph of preface- something I hope you’ll consider including in subsequent printings. Otherwise, I’m happy for you. It is a sweet and kind project that draws metaphors between growing care with another person and the construction of landscape- I think the images are effective and are successful in surviving in a digital space, despite their more-physical, watercolor origins. Congrats!

Kyuha Shim: The construction of cityscapes with text is interesting. Would love to see in-depth descriptions of how you set the rules for details (e.g. width of images, complexity of building or distance between buildings). Also, you can consider color as a parameter that varies upon emotion.

Golan Levin: The more I learn about it, and the more I think about it, the more I admire this project. Great process, great motivation. I wish it were somehow possible to both maintain and reveal the mystery of the book’s process.

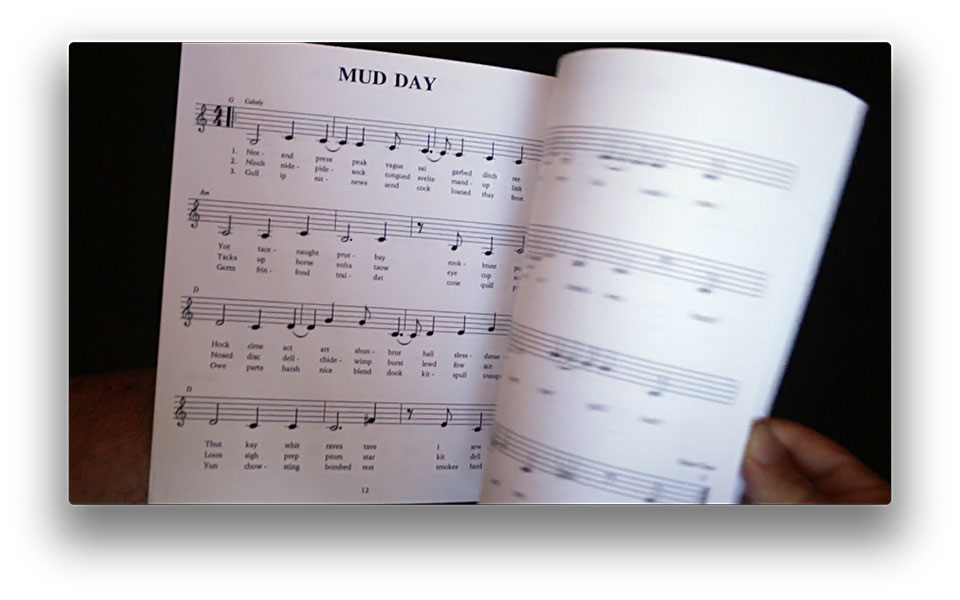

jaqaur

Nick Montfort: Seems like a good, ambitious project. Although my levels of time and talent will not allow me to try out the songs, I am amused at the lyrics. Whether or not the musical results are of the highest quality, trying to generate lyrical music and encountering what’s involved is great for this sort of book project. The book could use a title page.

Katie Rose Pipkin: I’m impressed. This is a serious undertaking in a small amount of time, and I think you’ve done a great job with material that is under-used in general. Congrats. For the future- I would love to see someone trying to play or sing one of these. Have you tried? To me, some of the most interesting spaces in bot and generative work is when they swing back around to interface with humans again.

Kyuha Shim: Great work and process! Would be great if you invite participants to play and sing your songs.

Golan Levin: Excellent work: a high-quality and self-evidently passionate investigation. Deserves to be performed.

kadoin

Nick Montfort: I am glad there was some engagement with text as well as image. However neither text nor image accomplish much. The text is not composed well, even if the goal is to present amusing nonsense. Try a different generative or combining technique or try different source texts. If the goal is humor, why is it funny for robots to fall in love or write to each other? The book could use a title page. No code or documentation of finished book provided.

Katie Rose Pipkin: User manuals and Shakespeare could get old fast, but I think your saving grace here is the rhyming couplet; they are short and sing-song enough that they retain a certain charm. Congrats on restraining your text away from becoming noise, and the illustrations are cute. That being said, I’d ask you to reach a little further than robots and Shakespeare- there is a massive weight of human history to pull data from, and it needn’t be the most obvious sources. Widen your gaze and I think your work could transcend ‘cute’.

Kyuha Shim: Confusing. ‘More documentation’ has not arrived. (November 2)

Golan Levin: This is very strong, comprehensive (i.e. all-around) technical work: the combination of flipbook with generated poetry; the use of both Markov chains and rhyming. Congratulations. That said, while the book is well-conceived, it unfortunately becomes somewhat monotonous: the animation follows a predictable pattern; the poems seem so tightly patterned as well, and lack sparkle. Perhaps the required length of a flipbook is too much for the limits of the expressive range of your text algorithm? Or perhaps the text should be even more dull. Apart from this, your online documentation is missing important requirements.

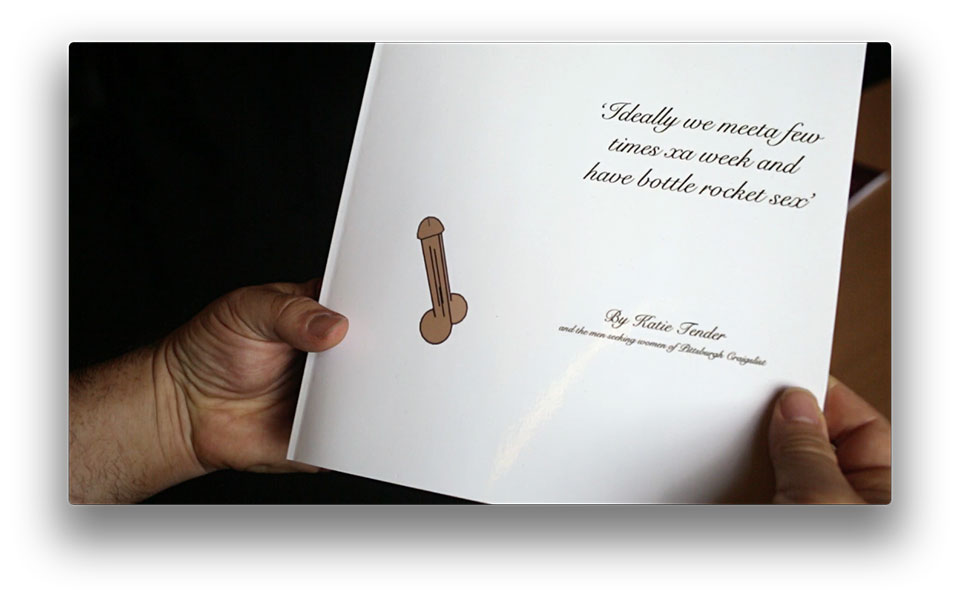

kander

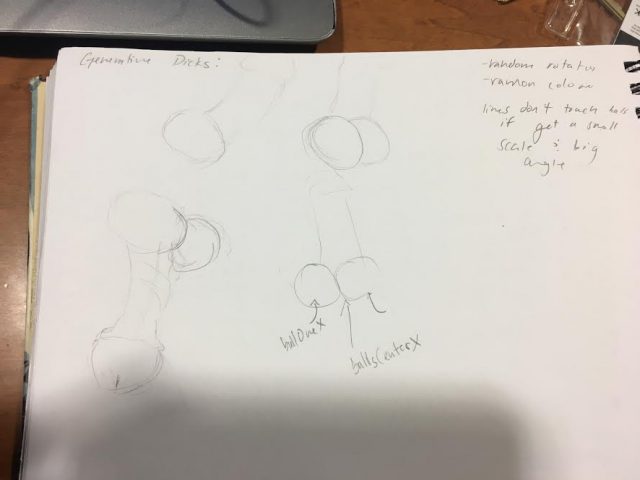

Nick Montfort: Definitely the best book of computer-generated dick pics that I have seen. It is amusing to computer-generate something so crass but also bodily (as opposed to platonic solids and other ideal shapes). The uniformity of the illustration, with differences in attitude and size, heightens the humor of the tweets. A good example of how a computer-generated element is added to amplify existing humor in quoted/appropriated text.

Katie Rose Pipkin: I’m impressed with the dick generator; truly. I hope you continue to work on it and add all of the natural variation that this kind earth has granted humanity. That said, I’d expected to get a good laugh or two out of your book; the title was especially promising. Instead, I ended up feeling kind of sad. Obviously, there is no accounting for taste, and what you find funny is unlikely to be the same as what I do, but the lack felt noticeable, or perhaps intentional. I see little wrong with laughing at the words of (as you called it) douchebaggery, but I suppose I found myself biting my lip at the prospect of laughing at sad, lonely people who had little intent to have their words recycled as a comedic art project. Given that you’ve given yourself free rein to pick the posts, I might ask that if you continue to work with found text you consider the morals of public space, and what is free to use, when.

Kyuha Shim: Interesting concept and visualization. Whether the italic script font works well with your illustrations is questionable.

Golan Levin: Good work, with a pleasing premise to the investigation, and: congrats on finishing first — though my question to you would be: what would be necessary to make this into really excellent work? The penises, while initially charming, quickly prove lacking in variety. The graphic design feels weak, and needs a tuneup.

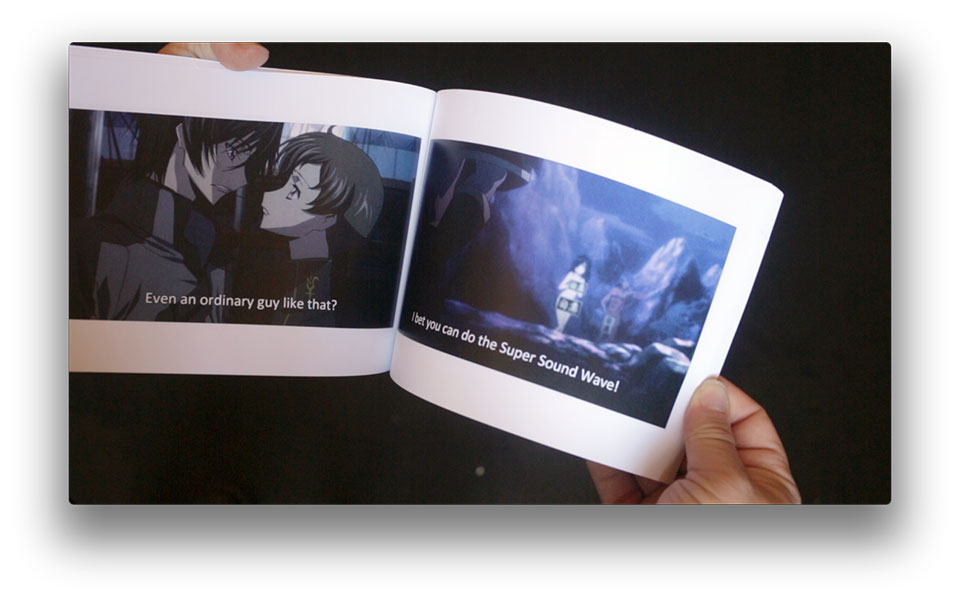

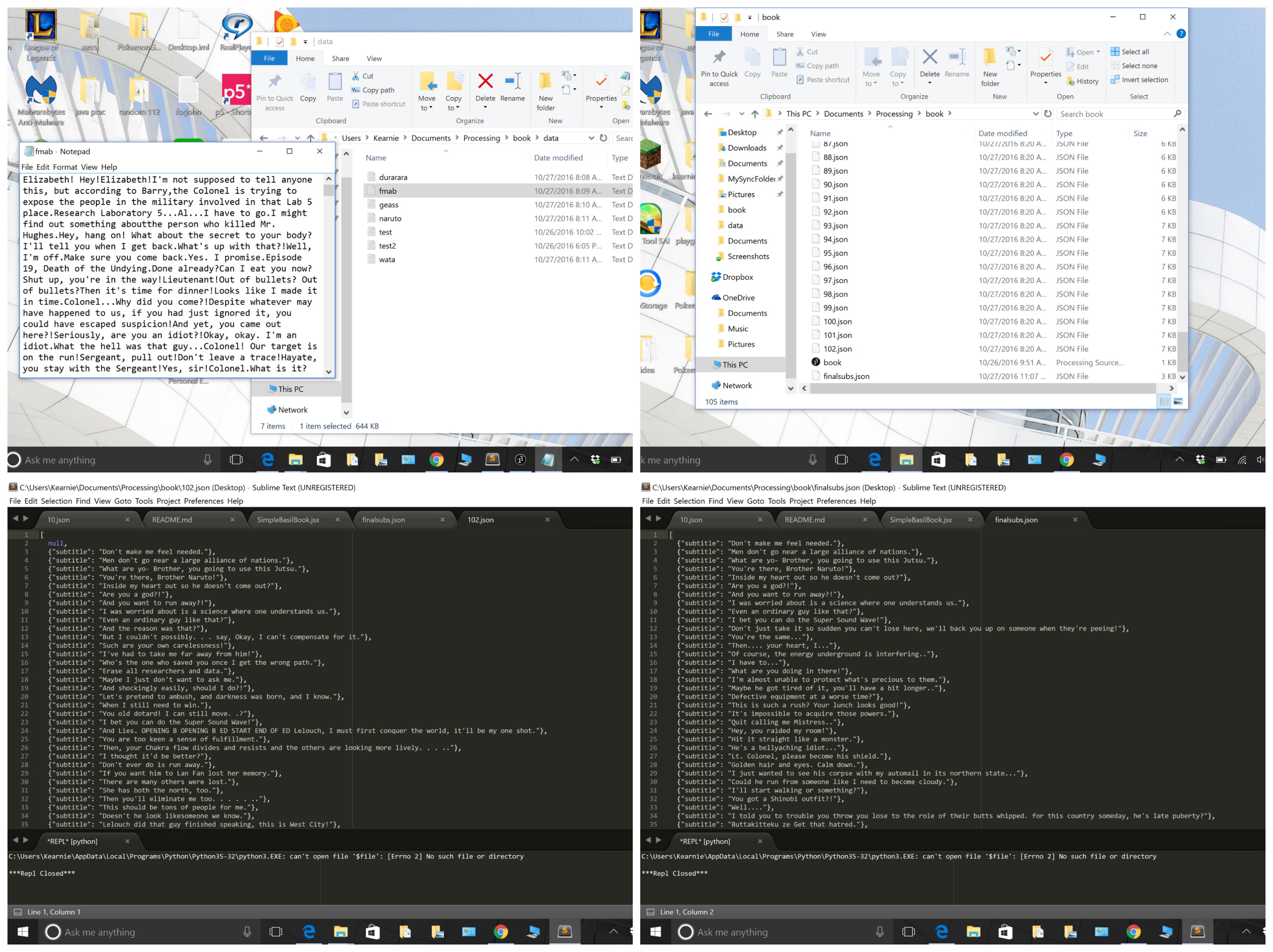

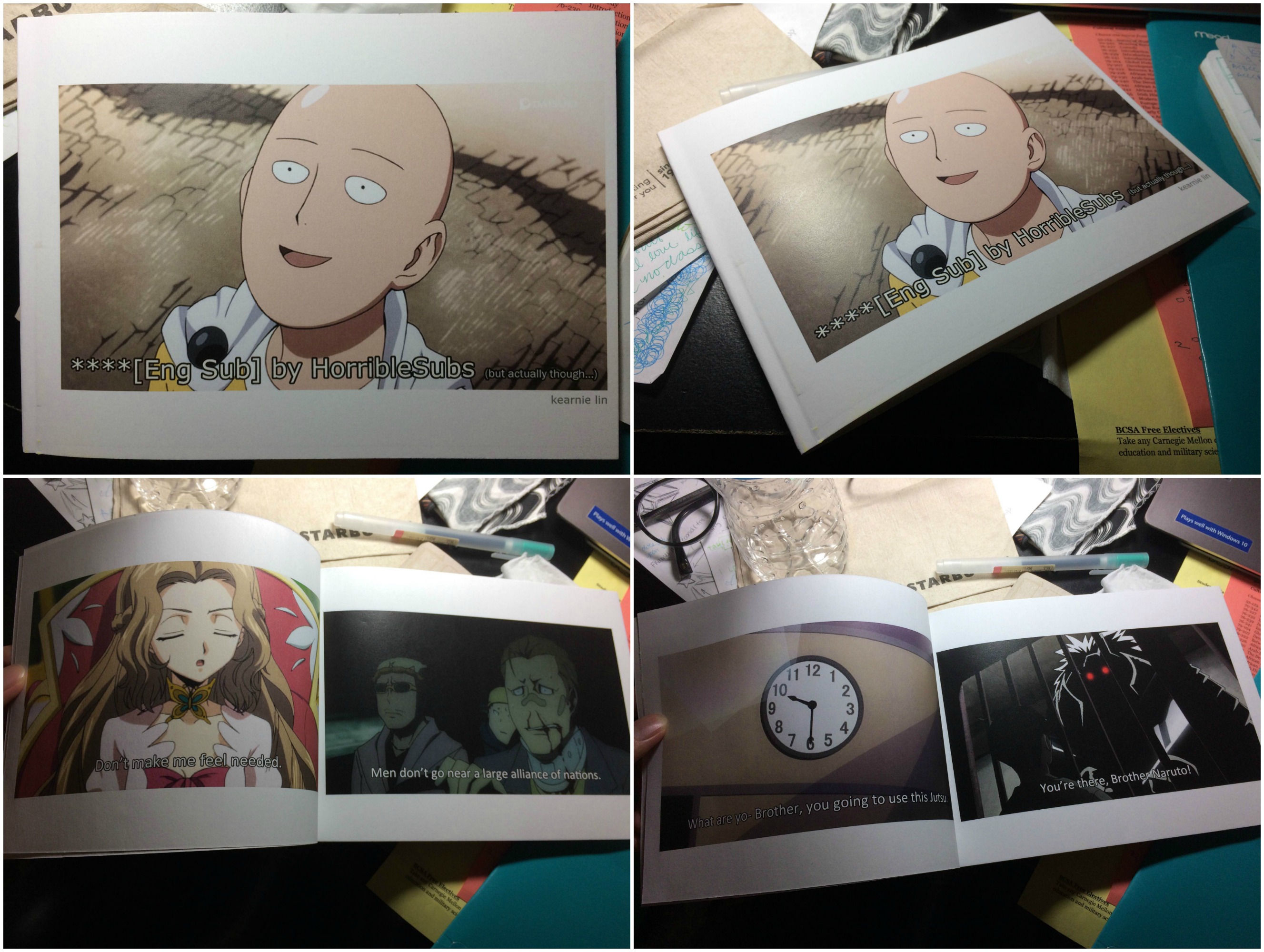

keali

Nick Montfort: When you see a humorous juxtaposition like this, you have to wonder who is being made fun of. The Japanese anime studio? The people (fans?) who subtitled the shows? Japanese culture? Our memories of childhood? Or if there’s no making-fun, why is this funny? It does seem like this is making something incongruous more incongruous. Is it better to Markov-generate titles, to just pick some other subtitle (the examples you show don’t include many that are obviously new texts), or just write your own like in Woody Allen’s What’s Up Tiger Lily? Well, I’m left with a lot of questions about the project, some of which at least should have been better addressed. I don’t object to cross-cultural work, appropriation, and so on, but as it stands the project leaves me wondering. As for the book design, you made a book where the cover looks the same as any of the inside pages, and it has no title page or other apparatus. Nothing in the PDF even indicates who it is by, and in this condition it risks looking like a printing/binding mistake rather than a book.

Katie Rose Pipkin: At my first read, I had a hard time drawing any context between subtitle and screenshot- they seemed randomly placed. Reading your documentation, I found they were indeed not expressly related, and that you’d had to generate each side (image and text) separately. I think this shows, and is a weakness here; perhaps consider tools like image recognition to help tie your words and images together. Still- for all the convolution of process (and lack of integration between actual screenshot and subtitle), you have made something that has some genuine humor in it, and I think is an entertaining flip through that retains the qualities of bad subtitles at large. Have you seen David Lublin’s TV Comment Bot? (https://twitter.com/TVCommentBot) It might pose some inspiration on ways to go about a project like this with a bit more automation.

Kyuha Shim: Making random pairups to achieve ‘light and comical’ almost seems too easy! It would be more thoughtful if there were conditionals to check the relation between imagery and captions. Perhaps having black background instead of white would make your spreads more legible.

Golan Levin: Satisfactorily executed and certainly well-documented, but had there been time, it would have been nice to investigate processes that could have generated captions that were more closely tied to the image content. Randomness seems like the easiest way out; there are good solutions that wouldn’t require strong AI.

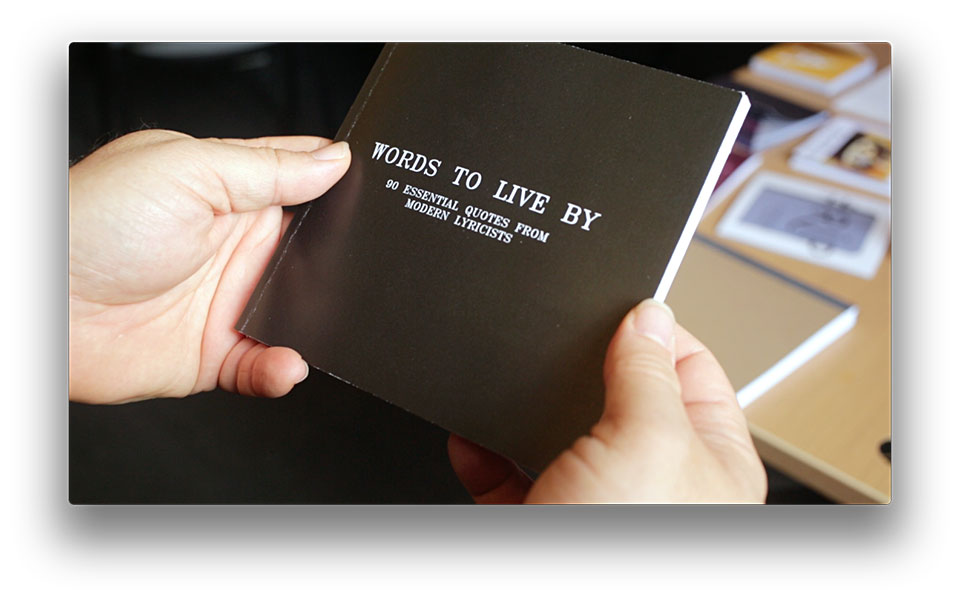

kelc

Nick Montfort: This is already a classic use of text generation, as in the bot @WhitmanFML (Walt “FML” Whitman). There are some good results in this case, although perhaps the racial politics of a poet “vs.” rapper mashup should be dealt with in the project. The book design seems lacking. No title page? Why is it best to have only one text per page, given that you can generate very large numbers? Why this number of texts? (Your cover says “90” but your final book block has 70.)

Katie Rose Pipkin: I think this book suffers from a common problem in generative processes, which is that in this breed of conflation (Erowid and Nietzsche, Marx and Taylor Swift, two headlines), the ones that work brilliantly are not always the ones that sound like they will be good. Unfortunately, I think this one does not work as well as one might hope- there is little for me to gain between jumping from ‘music the food of love’ to contemporary rap, perhaps because they remain so obviously separated by their time and contexts that there is no moment of blurring- I see the cut between sources clearly. I would suggest using this structure you’ve built with other media, maybe experimenting until you find one that allows the content to make something new.

Kyuha Shim: Your explanation about how you merged different text sources from rappers and poets could be more thorough. When breaking lines, you can use ‘balanced ragged lines’.

Golan Levin: Good start: many amusing moments.

krawleb

Nick Montfort: Cover is well-done, pages look all right but no folios (page numbers)? No title page? I appreciate your trying out this synonym-related technique, but the results are not very compelling and I’m not sure why these poems were presented. Why are they shown “intact” first when some (particularly very famous ones) would be known to readers? Why italicize the changes rather than let people wonder?

Katie Rose Pipkin: It is nice to see someone approaching text generation and not going straight for Markov chains- I personally think processes like synonym swapping can be a lot more interesting, and certainly get less play. I think your project had a lot more capacity than what it ended up producing, however- the slowly mutating individual words didn’t really affect how I read the poems as a structural whole, and I lost some interest after I realized what was happening. In truth, I simply don’t want to read the same poem 5 times, even if a few pieces have changed- perhaps increasing the number of words shifting over time could remedy this apathy. Still, good choice on process, and the moments where it shines are charming.
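
The suggestion above, to increase the number of words that shift from one version to the next, might look like the following Python sketch. The tiny thesaurus, the sample line, and the function names are all hypothetical stand-ins; the student's actual synonym source and poems are not described in this review.

```python
import random

# Hypothetical toy thesaurus; a real project would query a lexical database instead.
SYNONYMS = {
    "road": ["path", "lane"],
    "yellow": ["golden", "amber"],
    "wood": ["forest", "grove"],
}

def mutate(words, n_swaps, rng):
    """Return a copy of `words` with up to n_swaps swappable words replaced by a synonym."""
    swappable = [i for i, w in enumerate(words) if w.lower() in SYNONYMS]
    chosen = rng.sample(swappable, min(n_swaps, len(swappable)))
    out = list(words)
    for i in chosen:
        out[i] = rng.choice(SYNONYMS[words[i].lower()])
    return out

def versions(line, steps, rng):
    """Version k swaps k words, so later versions drift further from the source line."""
    words = line.split()
    return [" ".join(mutate(words, k, rng)) for k in range(steps + 1)]

if __name__ == "__main__":
    for version in versions("the road ran through a yellow wood", 3, random.Random()):
        print(version)
```

Here each version is mutated from the original line rather than from the previous version, which is one of several possible designs; the swap count could also grow faster than one word per step.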

Kyuha Shim: Elegant approach. It would be interesting to see the progression of swapping synonyms shown on a single page. An increase in leading (line-height) would make those italicised words more eye-catching.

Golan Levin: I respect your investigation. There are many well-considered aspects to your process, with results that vary between fascinating, boring, and machinically error-ful. I wonder, however, about your choice to pursue progressive corruption versus an approach that would have shown numerous alternative versions (thesaurus-izing); it’s unfortunately dull to read the same thing so many times, with such minimal changes.
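
Pipkin’s suggestion above, increasing the number of words that shift in each successive version, can be sketched in a few lines. This is only an illustration under stated assumptions, not the student’s code: the `SYNONYMS` table, the function names, and the sample line are all hypothetical, and a real project would presumably draw on a proper lexical resource.

```python
import random

# Hypothetical toy thesaurus, used only for illustration.
SYNONYMS = {
    "roads": ["paths", "lanes"],
    "diverged": ["split", "forked"],
    "yellow": ["golden", "amber"],
    "wood": ["forest", "grove"],
}


def mutate(words, n_swaps, rng):
    """Return a copy of `words` with up to `n_swaps` swappable words replaced."""
    swappable = [i for i, w in enumerate(words) if w in SYNONYMS]
    chosen = rng.sample(swappable, min(n_swaps, len(swappable)))
    out = list(words)
    for i in chosen:
        out[i] = rng.choice(SYNONYMS[words[i]])
    return out


def versions(line, steps, seed=0):
    """Return `steps` versions of `line`; version k swaps k words of the original."""
    rng = random.Random(seed)
    words = line.split()
    return [" ".join(mutate(words, k, rng)) for k in range(1, steps + 1)]


for version in versions("two roads diverged in a yellow wood", 3):
    print(version)
```

Each version is derived from the original line rather than from the previous version, so the amount of change is controlled directly by the step number.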

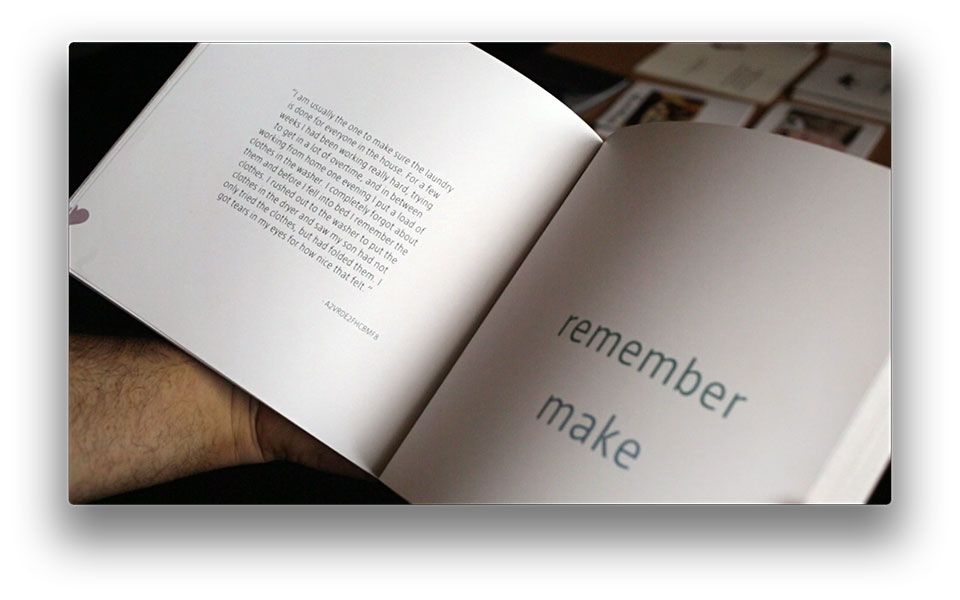

lumar

Nick Montfort: This project clearly involved a lot of effort and learning, although the texts that resulted are not extraordinary and the automatically scattered verbs impede, rather than enhance, reading. It’s okay to have discovered that through experiment; it would be great to find a way to “illustrate” a text automatically like that, one that is really effective. The IDs to which each quote is attributed seem bizarre, maybe in a good way. See Nick Thurston’s Of the Subcontract, which is more pointed in documenting the time spent and money earned for each poem. This project seemed to have a lot of little components that were fairly separate; see if you can figure out a better overarching idea and framework the next time you take on something like this.

Katie Rose Pipkin: A very cute book, both in concept and design. Mechanical Turk is a nightmare of a place, and I think there is interesting territory in producing tasks that are humanizing. Your binding is well-done, and I appreciated the care given to the outside edge of the book. In a sort of perverse way, I think a similar strategy could be used for material that was less uplifting- Mechanical Turk tasks that criticize the structure of the labor being done there, or what it is like to seek employment in that way. I’d consider ways your existing strategies could be applied to critical works with other things to say.

Kyuha Shim: How you consider a book as a 3D object is very good! But you could improve the typography. The large texts appearing on the right-hand pages especially need major fixing. The color of the text could be dictated by something other than its page number.

Golan Levin: Very interesting concept, strongly conceived process, and truly poignant results. I’m unexpectedly disappointed by the graphic design; the right-hand pages are weak.

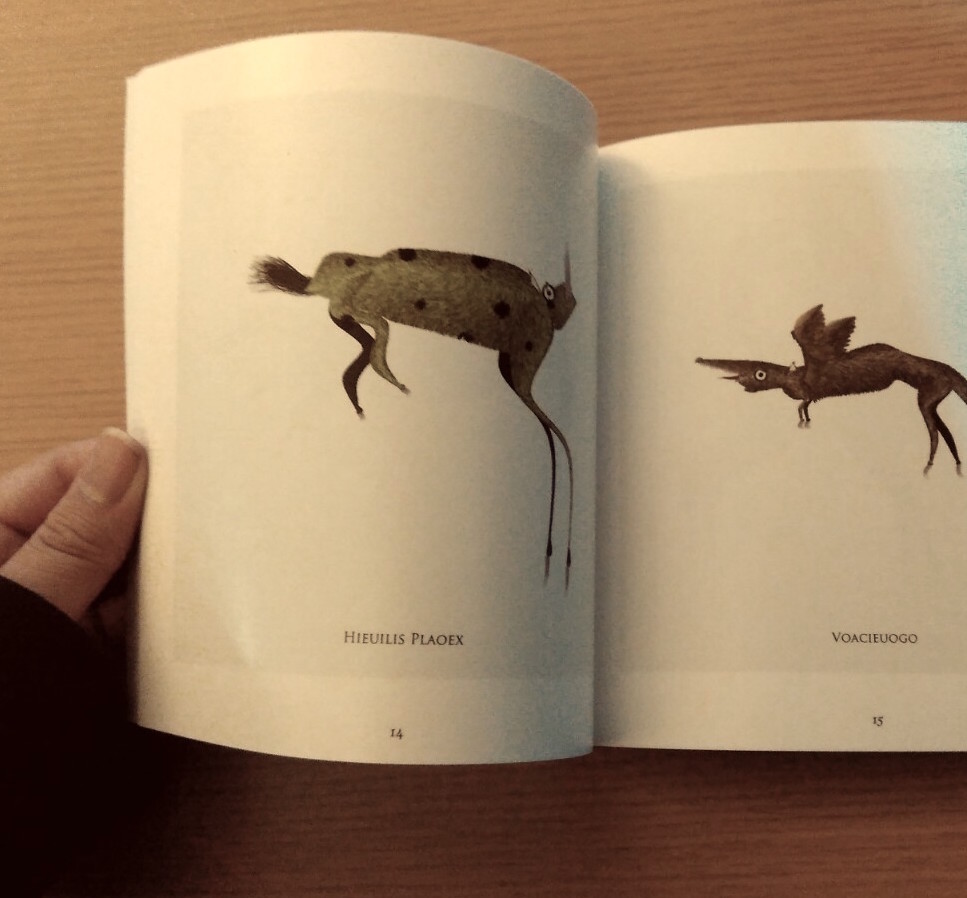

ngdon

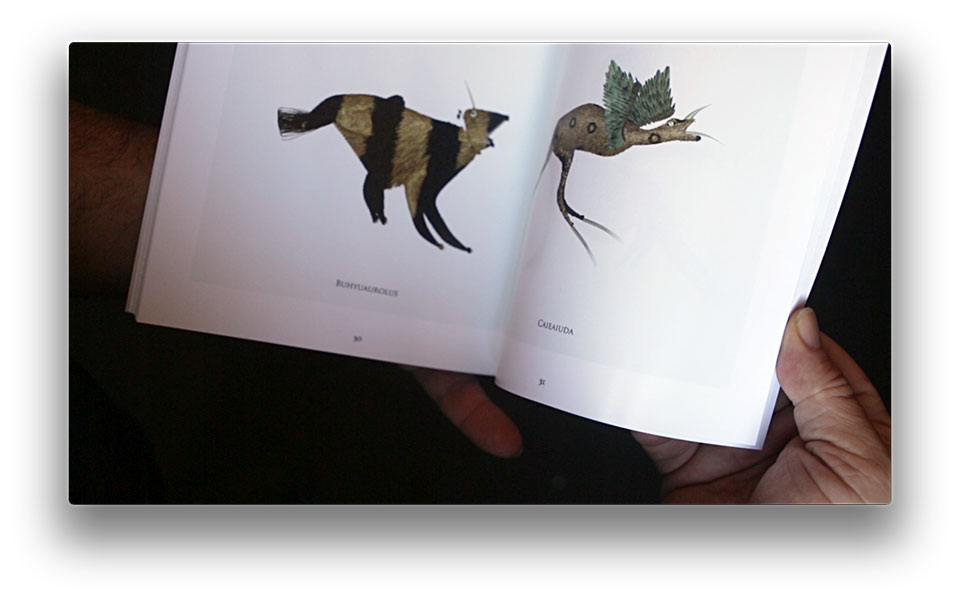

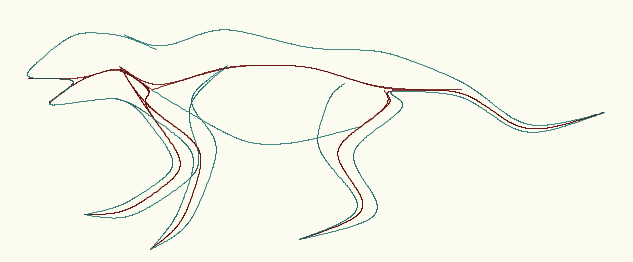

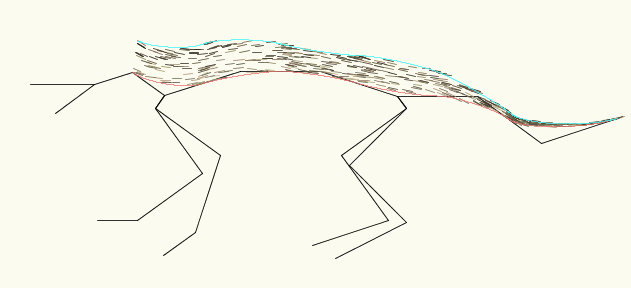

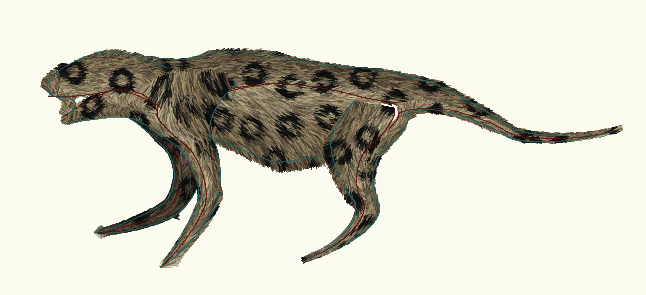

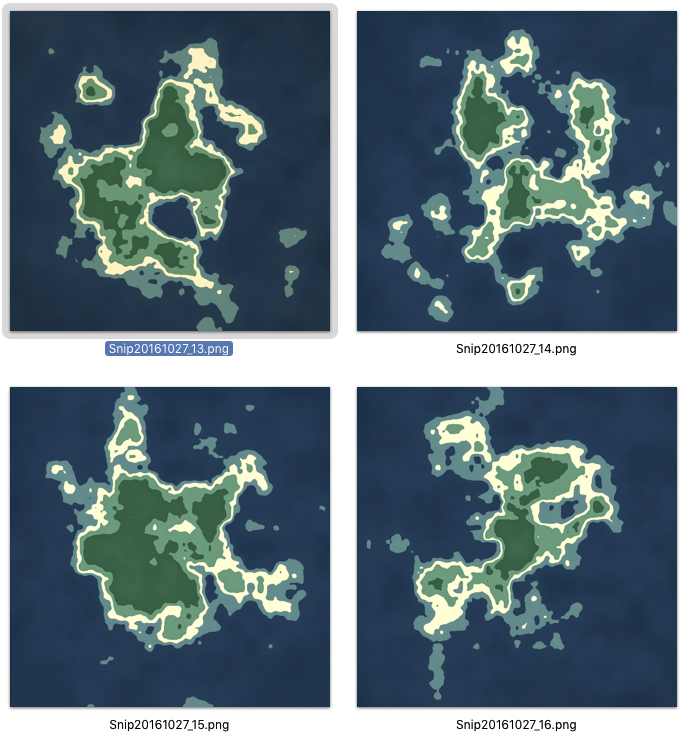

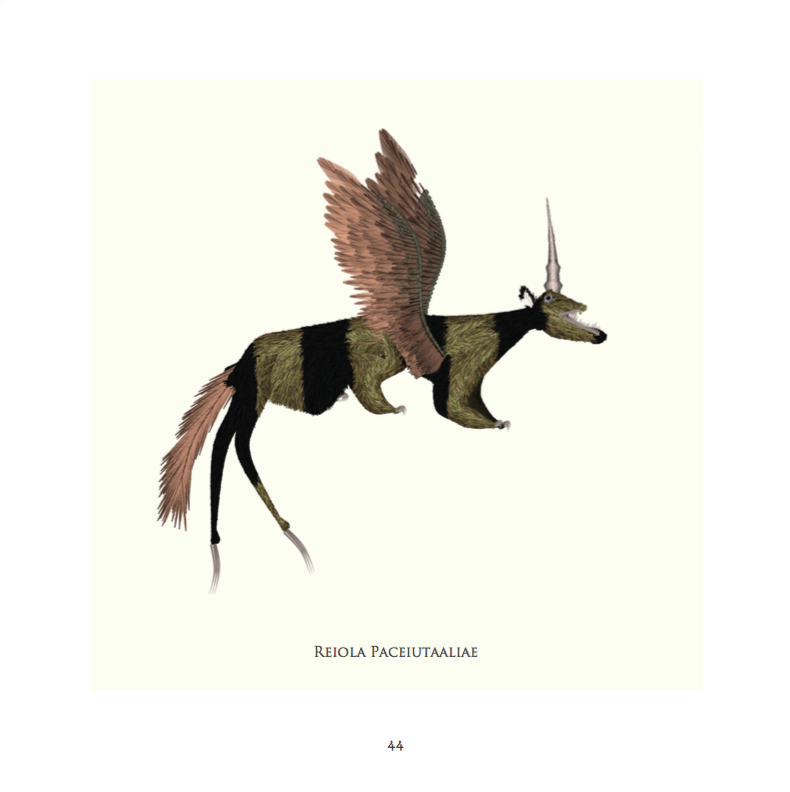

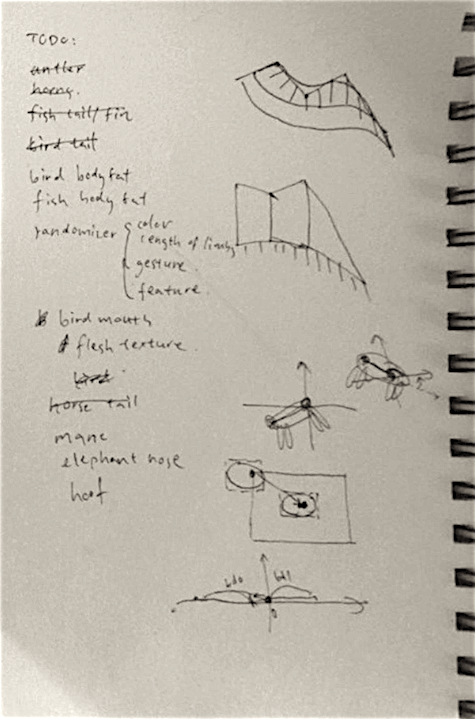

Nick Montfort: Very nice and a good concept, since mythical beasts (and also actual biological ones) exhibit combinations of other creatures’ attributes. The bestiary is an established literary form. Good selection of a high-level topic and good work in the generation of fauna. Nice connection to the classic Chinese book, too. You could more richly model what causes creatures to take different shapes, of course, and could auto-generate verbal descriptions of them rather than just names.

Katie Rose Pipkin: Congratulations: I’m impressed. This is a pretty remarkable project to execute in a week. If you are interested, I hope you continue to work on this generator, adding variation over time as you have it. You mentioned in your project description that you are considering continuing this idea into geography and plants, which I think could be quite compelling. I think you should look at Emily Short’s procedural work, particularly this one- https://drive.google.com/file/d/0B97d5C256qbrOHFwSUhsZE4tU0k/view. I’d also suggest submitting this to procjam (http://procjam.com/) if you’d like a more public response. I think it deserves it!

Kyuha Shim: Impressive work! Perhaps viewers would appreciate it if you included a brief description of the relations between the island, the animals, and their names before or after p.2.

Golan Levin: Impressive work. Would be an interesting challenge to generate descriptions (biological or mythological) about the creatures. Unfortunately, the creatures’ eyes look ‘dead’ to me, and would be worth revising. (Also: nice use of Mitchell’s algorithm.)
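
For readers unfamiliar with the algorithm Levin mentions: Mitchell’s best-candidate algorithm spreads points evenly by generating several random candidates for each new point and keeping the one farthest from all points placed so far. The sketch below is a generic version in the unit square; how the project itself applied the algorithm is not stated here, and the function name and parameters are assumptions made for illustration.

```python
import math
import random


def best_candidate_points(n, k=10, seed=0):
    """Place n points in the unit square using Mitchell's best-candidate rule.

    For each new point, k random candidates are drawn and the one whose
    nearest already-placed neighbour is farthest away is kept.
    """
    if n <= 0:
        return []
    rng = random.Random(seed)
    points = [(rng.random(), rng.random())]
    while len(points) < n:
        best, best_dist = None, -1.0
        for _ in range(k):
            candidate = (rng.random(), rng.random())
            # Distance from this candidate to its closest existing point.
            nearest = min(math.dist(candidate, p) for p in points)
            if nearest > best_dist:
                best, best_dist = candidate, nearest
        points.append(best)
    return points


points = best_candidate_points(20)
print(len(points))
```

Raising `k` tends to give a more even spread at the cost of more distance checks per point.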

takos

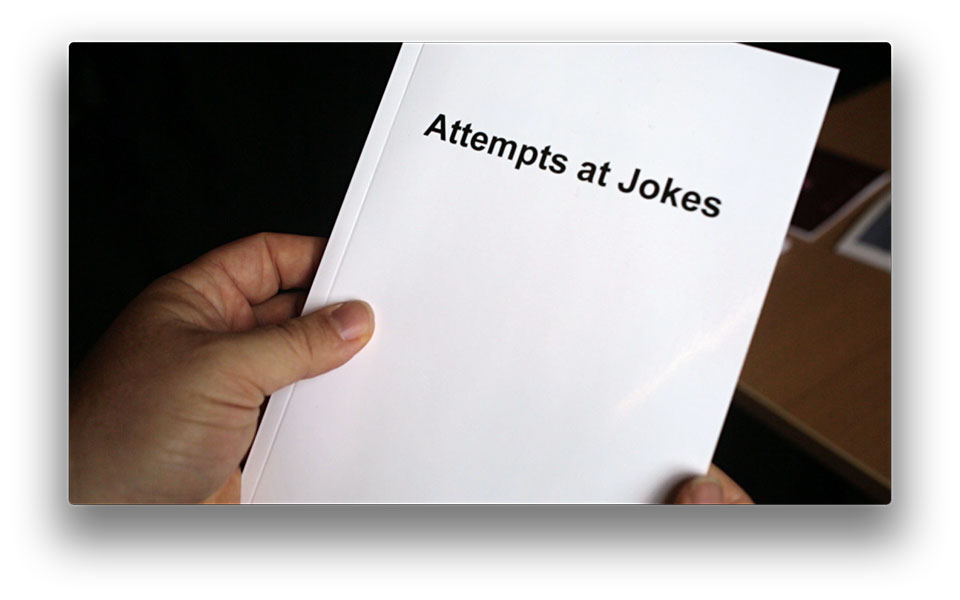

Nick Montfort: There is a good bit of academic work in humor and joke generation, recently by Tony Veale for instance. The attempt in this project is not generally a bad idea, as it guarantees a sort of cohesion but allows the topic to change, maybe amusingly. However, it is not carried out very well in this book; how it works in bot format is another question. Your book repeats the same tweets time after time, which isn’t necessary or a good idea. There is way too much reminding people of the Twitter origin of the text; why are there (fake? changing?) names attributed to each text, for instance? The note at the beginning is unnecessary. You could mention the origin of the statements at the end if you think you need to. Also, there is no title page to your book. There is a rich and well-known tradition of joke books that you could refer to, perhaps making a colorful cover as if your book were for kids, but your book just has a title in black on a white cover. If you want to take advantage of the book format, you could put the question on the right (recto) and have the reader find out the answer when turning the page.

Katie Rose Pipkin: Cute idea that fails to really work for me. I think you could use some simple rules to avoid repetition of the same tweet content, as well as recognizing when your ‘because’ is not followed by enough other text to form an answer. I know the book is called ‘attempts at jokes’ and not ‘jokes’, but to me it is failing structurally rather than because of the content. I’d revisit the rules by which you are gathering tweets, see where things fall down, and write some cases to circumvent them.

Kyuha Shim: Clear arrangement of all information. I can understand your reason for selecting tweets based on ‘why did’ and ‘before’, but would appreciate it more if you incorporated a few other structures for jokes.

Golan Levin: Good job, though gem juxtapositions are rare. More work is needed to improve the ‘quality’ of the non-jokes, while I certainly appreciate that it’s difficult to do so. The layout leaves something to be desired.
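
The two rules Pipkin proposes above (skip repeated tweets, and skip a ‘because’ that is not followed by enough text to form an answer) could look something like the following. This is a hedged sketch rather than the student’s pipeline: the function name, the three-word threshold, and the sample tweets are all hypothetical.

```python
import re

BECAUSE_RE = re.compile(r"\bbecause\b", re.IGNORECASE)


def filter_answers(tweets, min_words_after=3):
    """Keep tweets usable as a 'because' answer, dropping repeats.

    A tweet is kept only if at least `min_words_after` words follow the
    first 'because', and if the same text (ignoring case and spacing)
    has not been kept already.
    """
    seen = set()
    kept = []
    for text in tweets:
        match = BECAUSE_RE.search(text)
        if match is None:
            continue
        tail = text[match.end():].split()
        if len(tail) < min_words_after:
            continue
        key = " ".join(text.lower().split())
        if key in seen:
            continue
        seen.add(key)
        kept.append(text)
    return kept


sample = [
    "Because I said so and that is final",
    "because",
    "because  I said so and that is final",
    "It left because reasons",
]
print(filter_answers(sample))
```

On the sample list, only the first tweet survives: the second and fourth have too little text after ‘because’, and the third repeats the first.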

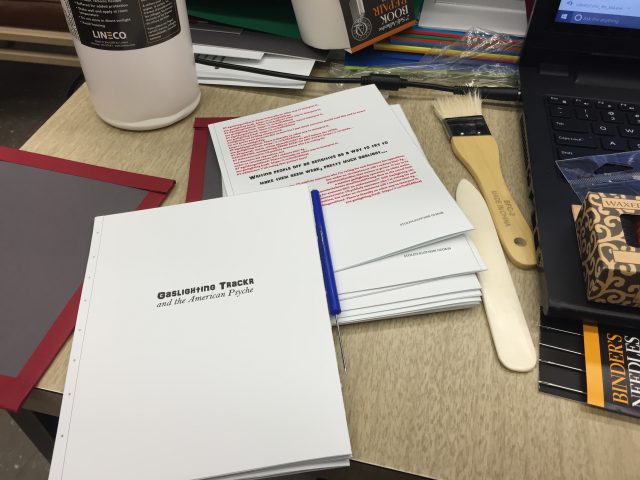

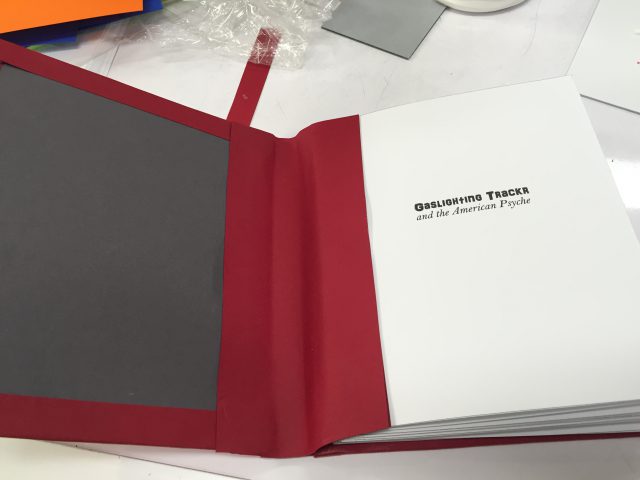

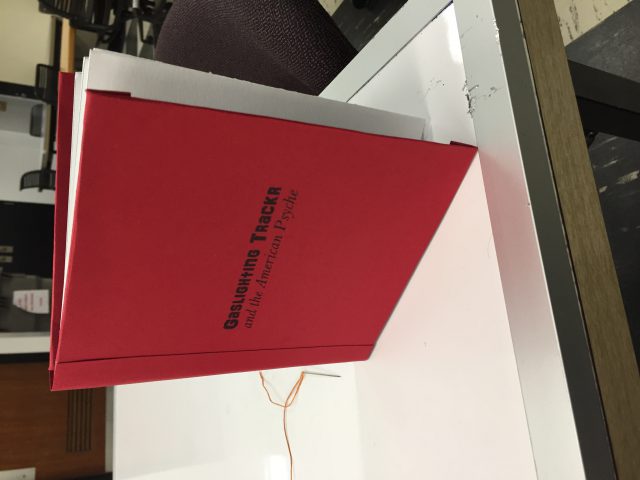

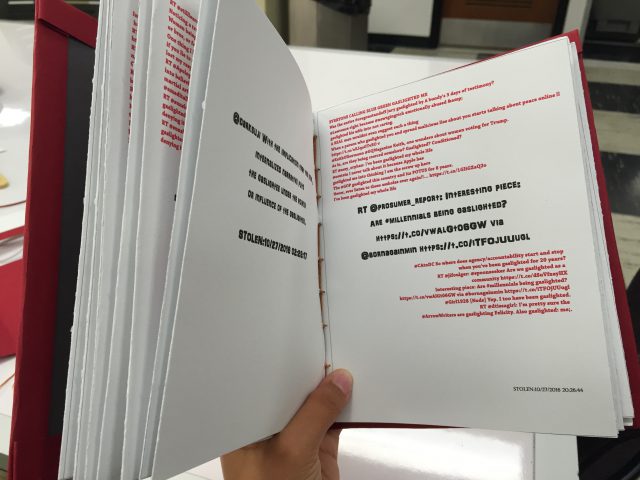

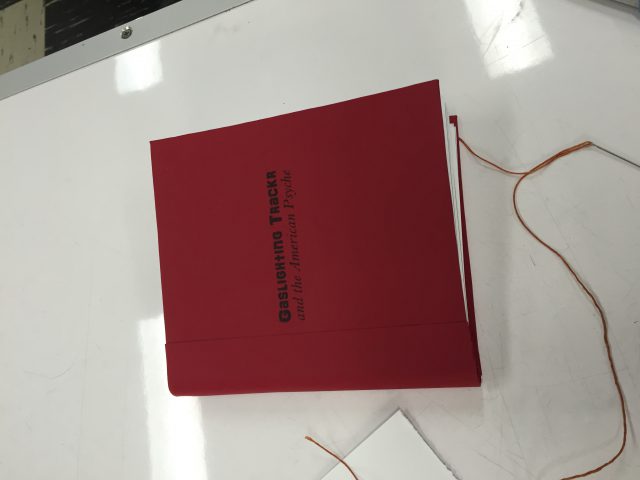

tigop

Nick Montfort: A simple example of grep poetry/Twitter search but presented visually very well in terms of the page layout, and with a nice binding, too. This term matches an interesting collection of texts, too, and the result is interesting to read. I’m not sure it manages “to unveil what the Twitter population’s thoughts on gaslighting were” because these tweets are taken out of context — they might be replies, captions to photos, quoted retweets, and so on. But without being documentary they are still worthwhile.

Katie Rose Pipkin: First off- your binding is beautiful, and the physical object deserves some real recognition. I wish I felt the content was as solid as its cover. I’m glad you pursued something that could be considered political, but I feel it gains little from being pulled off twitter- the same tweets and material are available to me in the search bar, and you’ve offered no particular stance or additional weight for your part. Perhaps even doing some simple scrubbing of text- removing links we can’t follow and handles we can’t click- would help recontextualize it in book format, and let it be about the word and concept and not just twitter.

Kyuha Shim: It’s not clear what the intention was in choosing ‘gaslighting’ as a keyword. Typography could be improved.

Golan Levin: Acceptable work, and nicely bound, though a late submission. Strikes me as lacking a strong enough point of view, and needs editing, or more ideas than a mere search for a term.
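
The “simple scrubbing” Pipkin describes above, removing links a reader can’t follow and handles they can’t click, can be approximated with two regular expressions. The patterns and the function name below are assumptions chosen for illustration; they are deliberately loose and will not catch every form a URL or handle can take.

```python
import re

URL_RE = re.compile(r"https?://\S+")
# The lookbehind avoids stripping the domain part of e-mail-like strings.
HANDLE_RE = re.compile(r"(?<!\w)@\w+")


def scrub(tweet):
    """Remove URLs and @handles, then collapse the leftover whitespace."""
    text = URL_RE.sub("", tweet)
    text = HANDLE_RE.sub("", text)
    return " ".join(text.split())


print(scrub("@someone gaslighting is real https://t.co/abc123 ok"))
```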

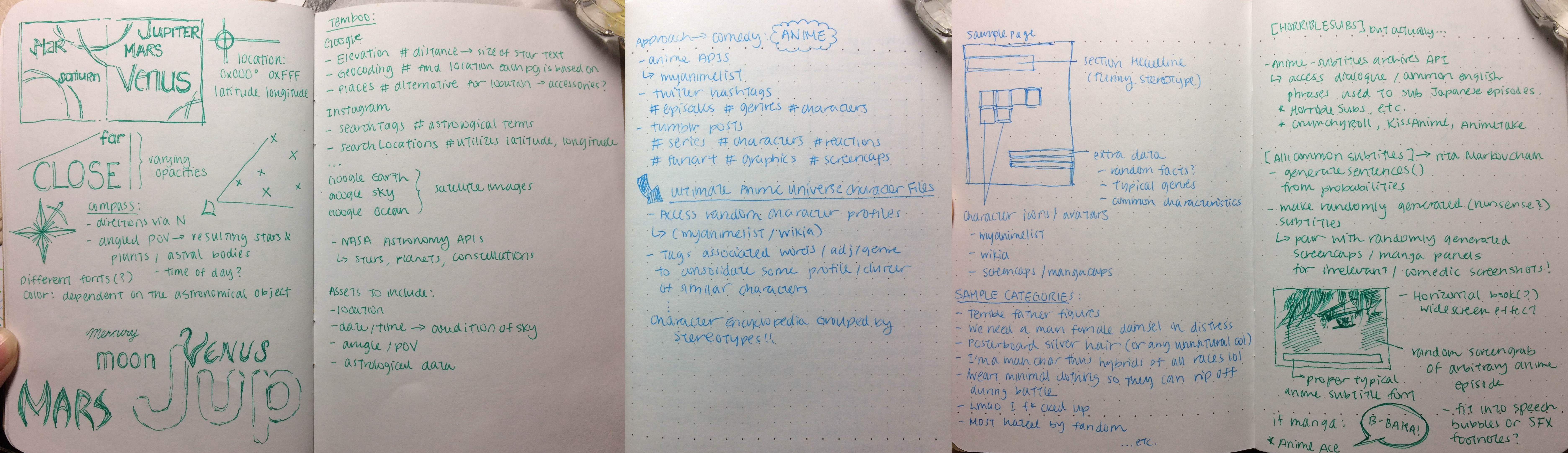

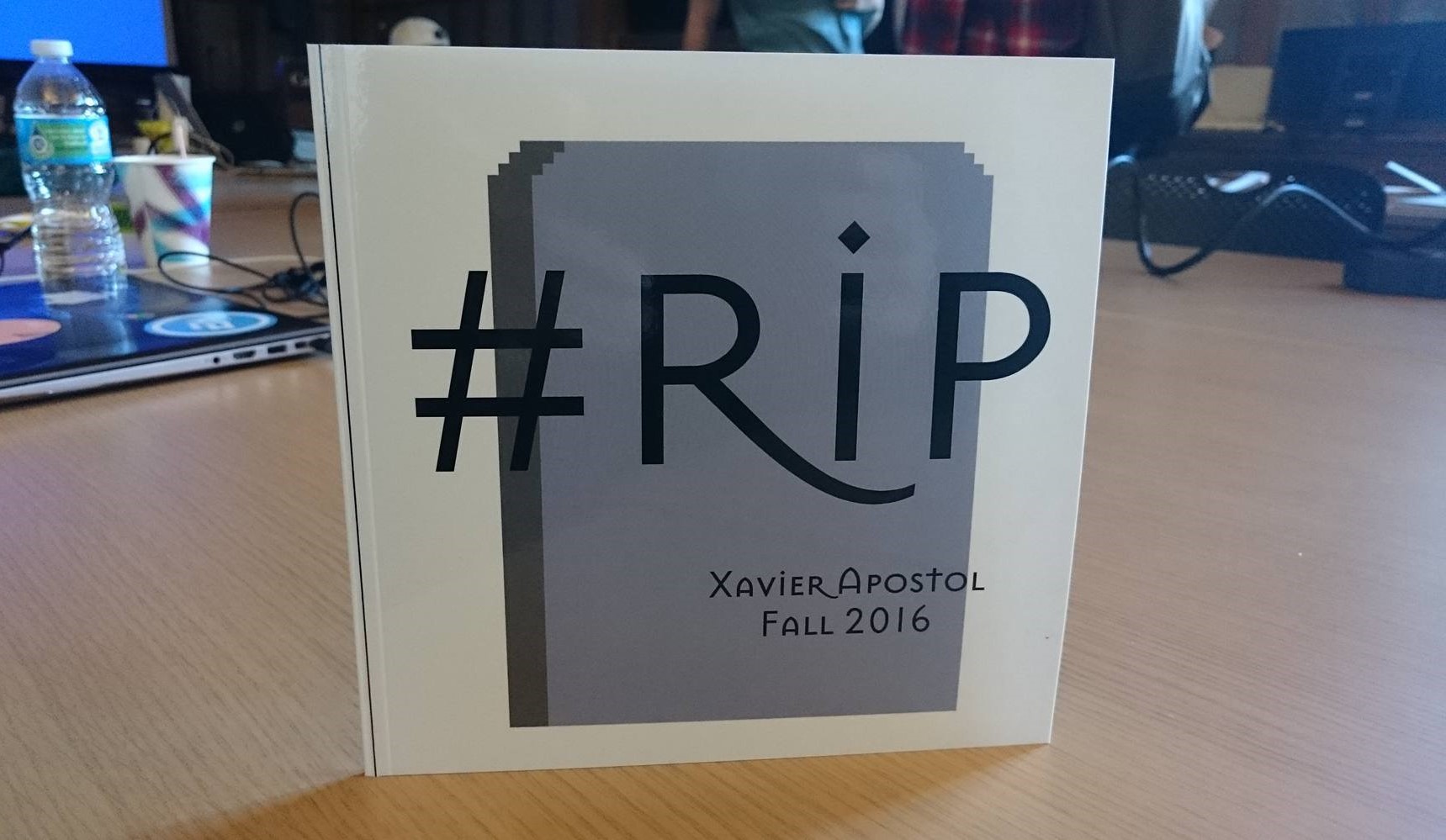

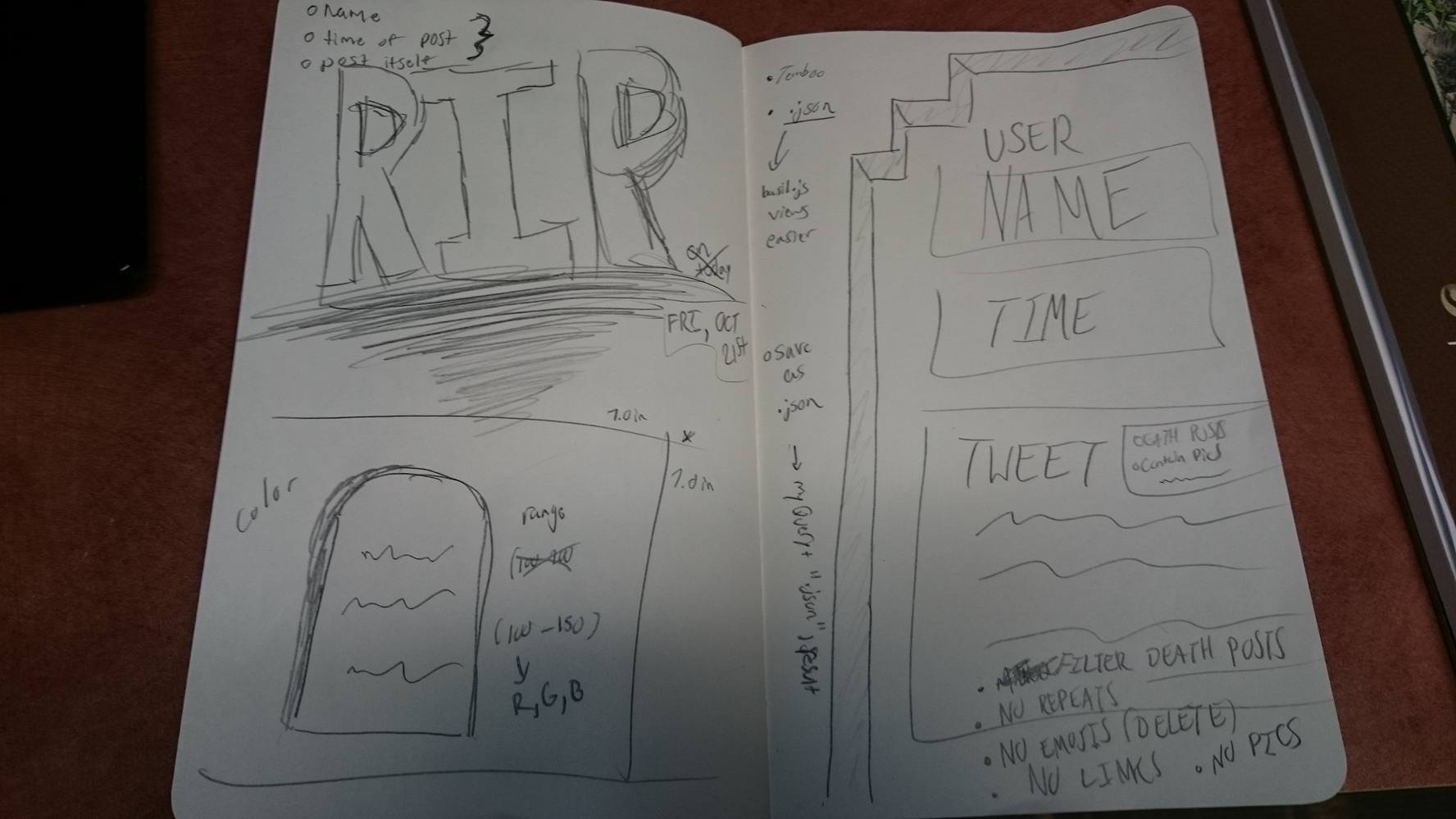

xastol

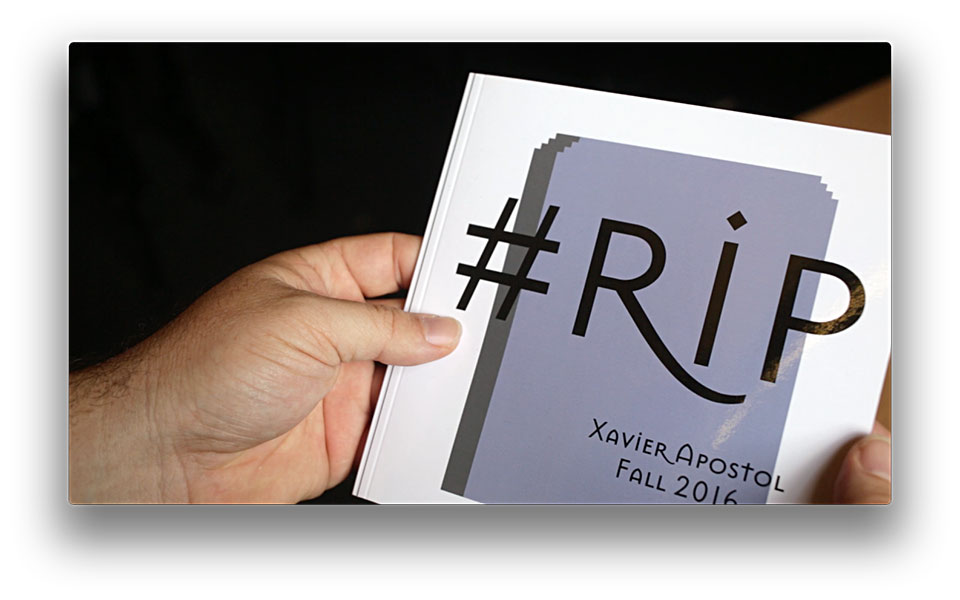

Nick Montfort: The layout here is generated, so I suppose this small book is applicable, but it’s almost as if these were 24 random tweets. I learn almost nothing about how people use the #RIP hashtag that I hadn’t guessed. Nothing about people in general, language, or Twitter. Why October 21, 2016, other than an impending due date? I appreciate the connection between the page images and the concept, but much could have been done to make the book readable, interesting, and provocative.

Katie Rose Pipkin: I’m afraid I liked the concept more than the actual result; this is a sad but common side-effect with generative projects, where sometimes the one-liner description is more compelling than the material that comes of it. In the book’s best moments, there was humor; in its worst (which was, for me, the cut-off tweets with links and not much else) I felt like I was looking at noise. Still, congrats on getting it printed, with cute procedural graphics no less- my advice for the future is to perhaps keep thinking of rules that help you to cut out content that is not interesting or compelling for your idea, and to grab that which is.

Kyuha Shim: Nice choice for the typeface! It could be more interesting if there were variations of tombstones.

Golan Levin: A bit facile, and not as memorable as it could be. There’s lots of ‘junk’ in there; I feel like the challenge in a project like this is to create a computational “editor/curator” with a strong voice, and it’s not there. As I discussed with you previously, I feel like you missed an opportunity to use the assignment to address your core interests, e.g. film — why not generate a screenplay, etc? This project is competently done, but also feels like you’re not as invested in it as you could be.

zarard

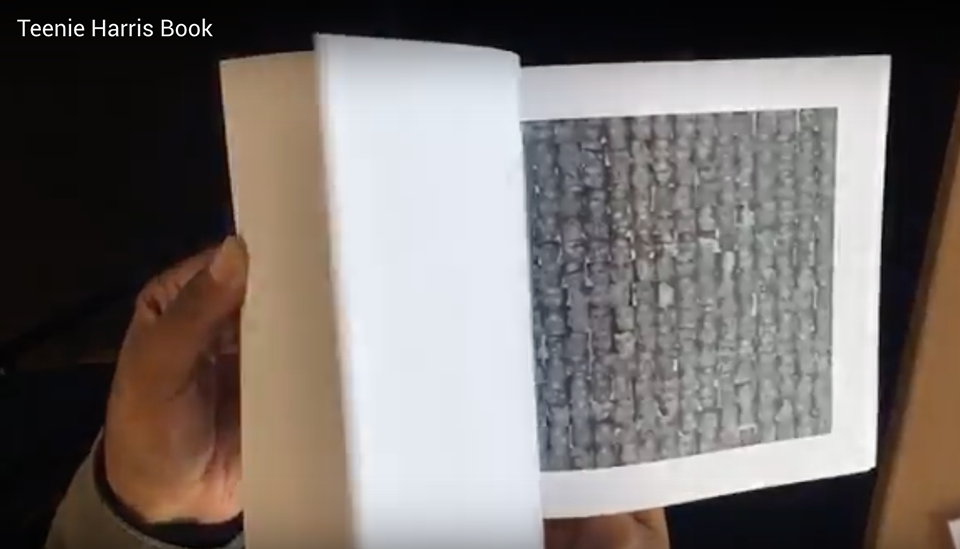

Nick Montfort: This is a nice use of computation to engage with a photographer’s work and give a different sort of perspective on it. As noted, the information at hand is about the photos rather than the people or how they looked to anyone other than this camera. I think sorting the face details by brightness may not have been the best approach, because this method itself suggests that “color” (brightness/darkness) is the primary axis for organizing people. It would be interesting (if much harder) to organize the faces based on where they were looking, for instance. Still, lots to think about and look for in this project.

Katie Rose Pipkin: I commend you for working with an interesting dataset, one that has its own inherent strengths. Many others seemed to be fighting to make twitter or flickr feel personal, or meaningful. It is wise to use source material that has its own unique voice already, and congratulations for seeing that. The work you’ve done on top of the quality of material you’ve brought to the table seems fairly minimal, but it has a strong argument to it. Keep it up!

Kyuha Shim: The description includes only self-evaluation and execution methods. What is the project objective and concept? What is demonstrated, apart from sorting portraits?

Golan Levin: An extremely interesting investigation — though, impaired by a somewhat slack execution. For example, I found it necessary to add (to your blog post) information about who Teenie Harris was, so that a typical reader (and potentially — the other reviewers) would be able to understand what they were looking at. Also: The digital and printed objects suffer from low resolution and very poor print quality — e.g. I happen to know that you could have extracted the faces from higher-resolution versions of the images, and the Espresso book machine does not reproduce the images well. I also really don’t understand your peculiar and almost disrespectful choice of font (Cooper? — Really? The Diff’rent Strokes sitcom font? The Tootsie roll font?). Also, your documentation does not contain your code, as requested in the assignment statement. In sum, this is a fine project, but I wish there were some more ‘care’ taken to make a high-quality presentation.

Robot sees the world through rose tinted receivers and transmits the waveforms of the words “I love you.”

Things to observe and learn when you start becoming a type nerd

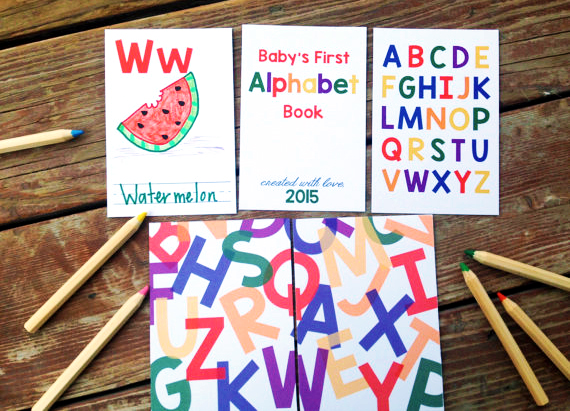

Baby Alphabet Book

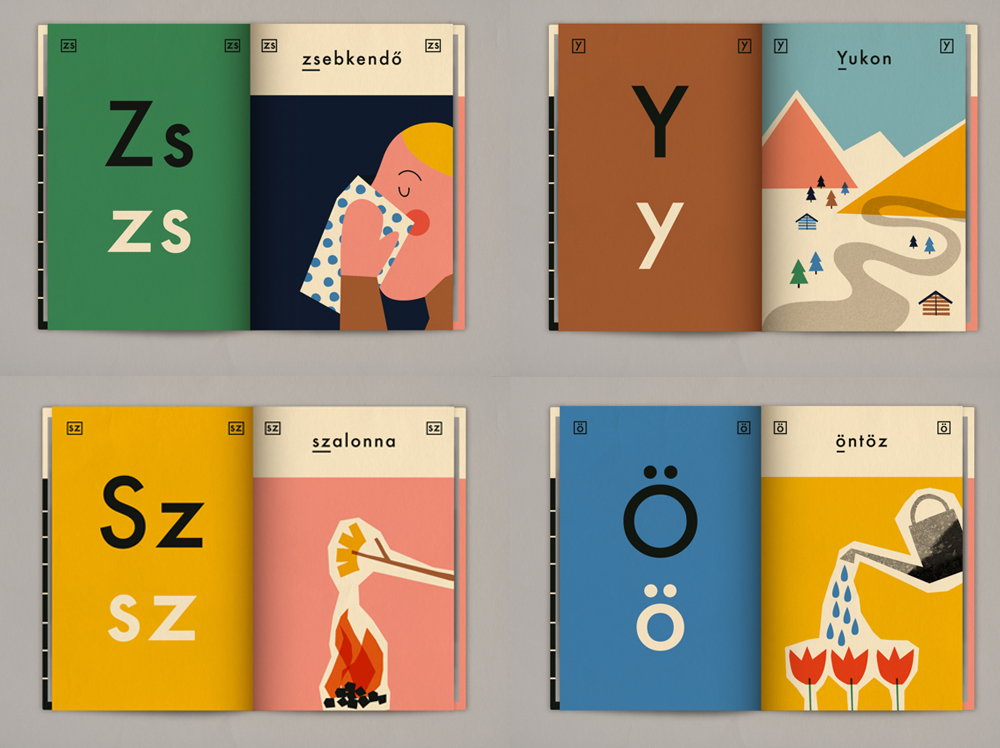

Anna Kovecses’s Hungarian Alphabet Book

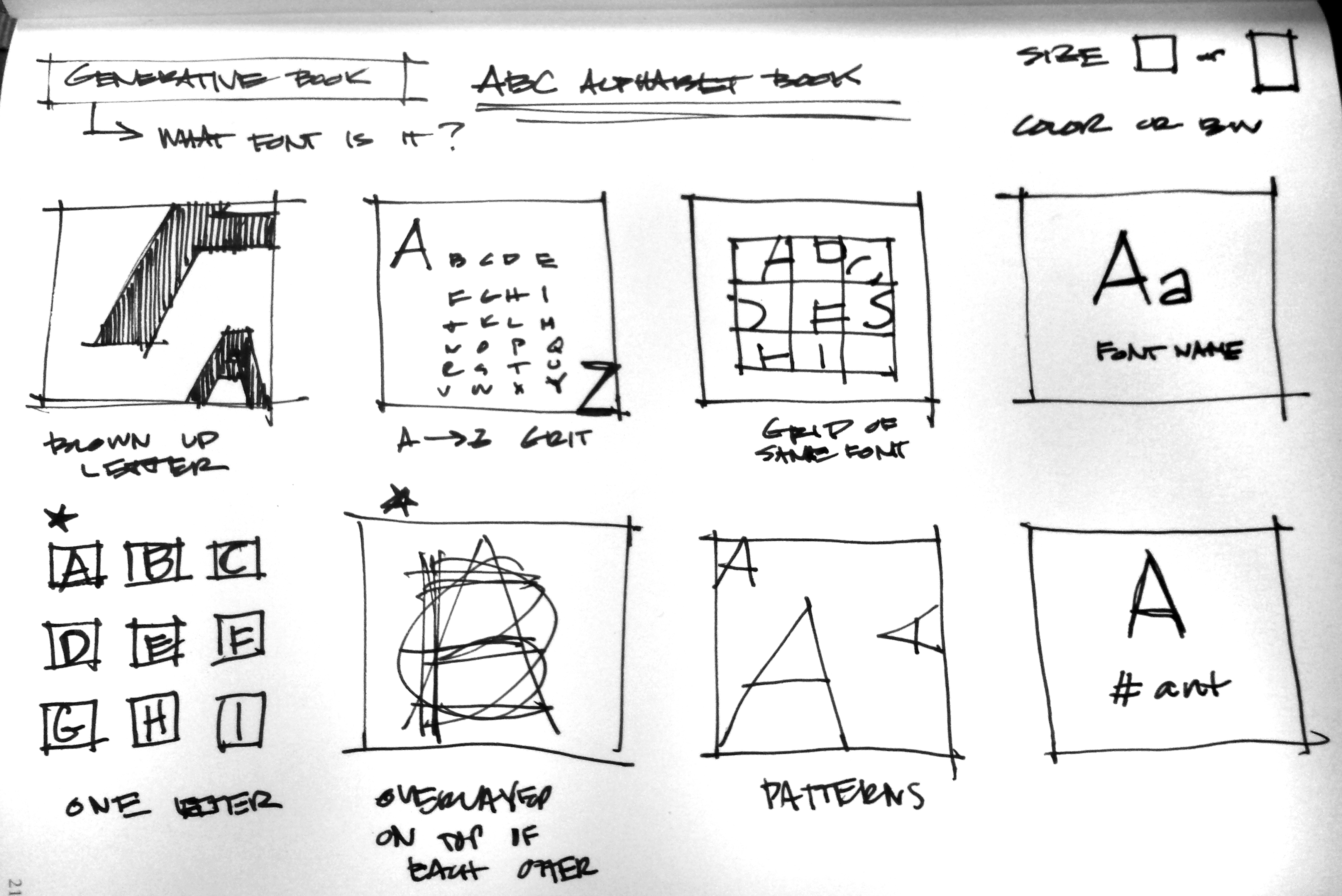

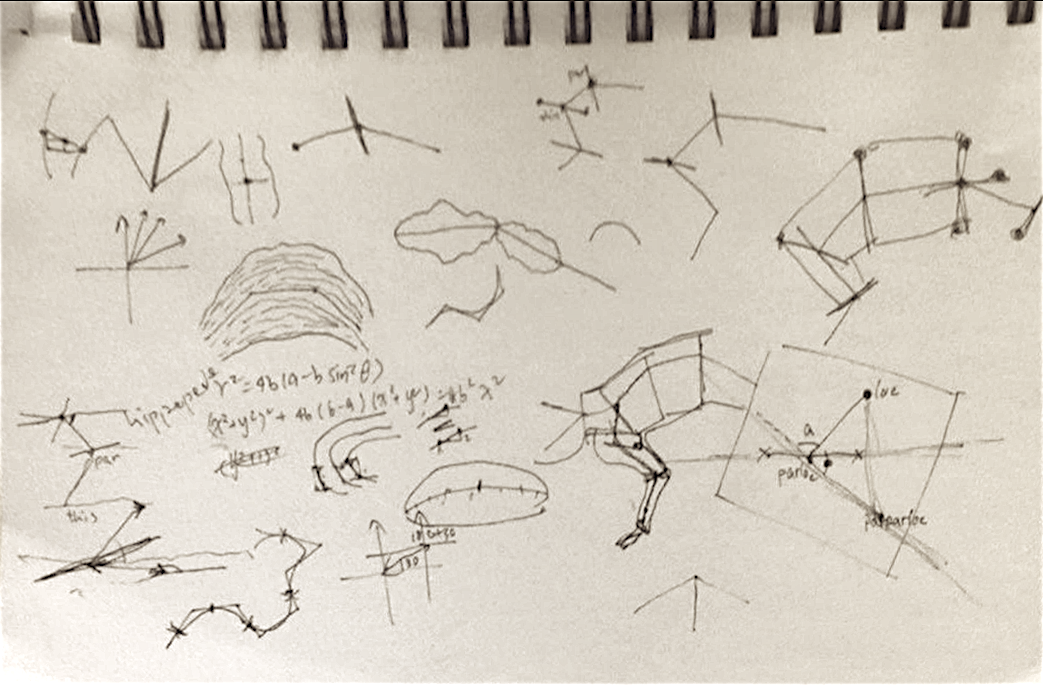

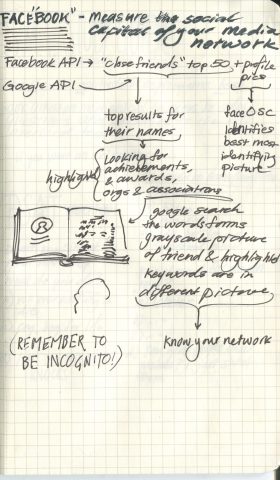

Initial Ideas for an ABC book

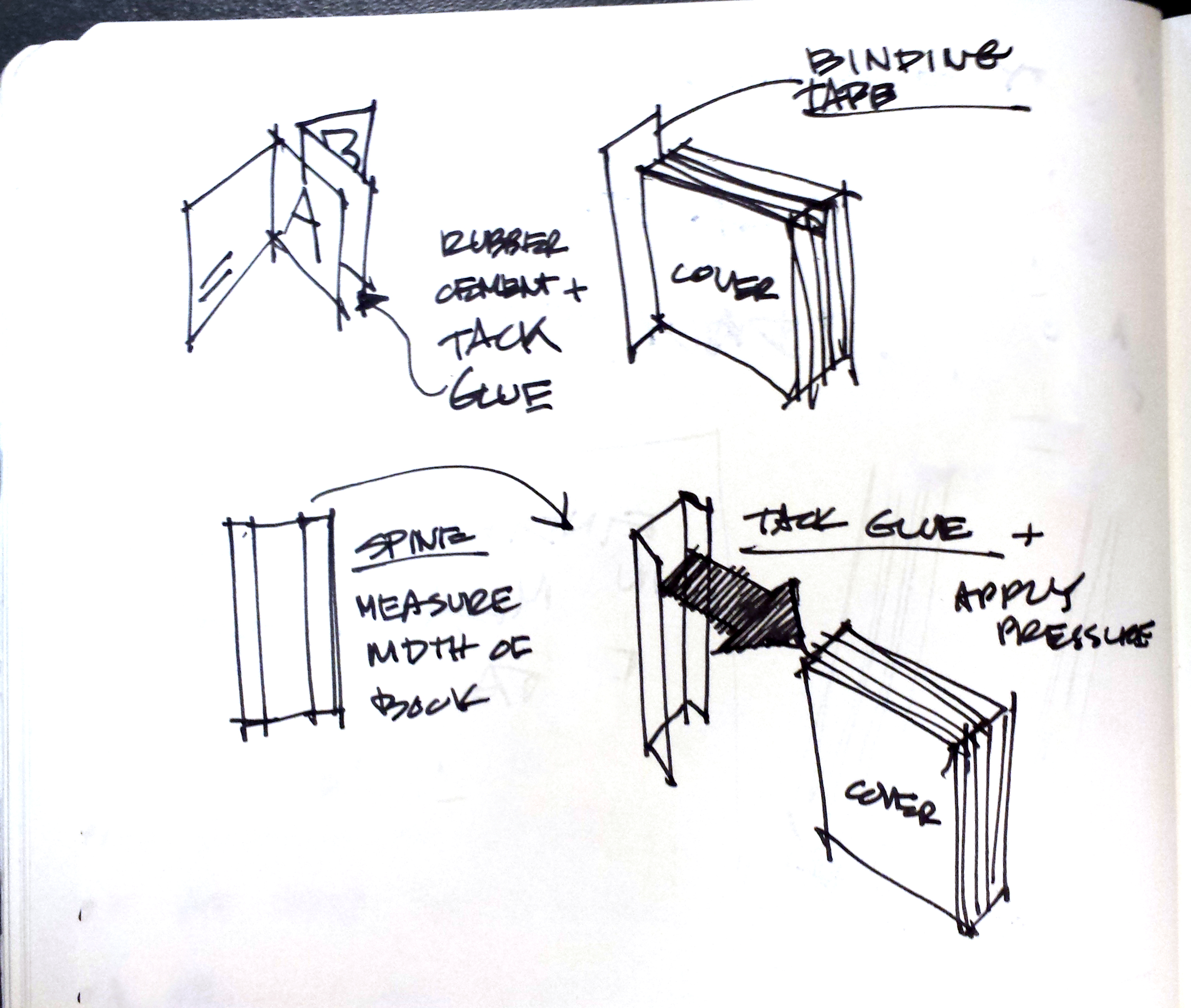

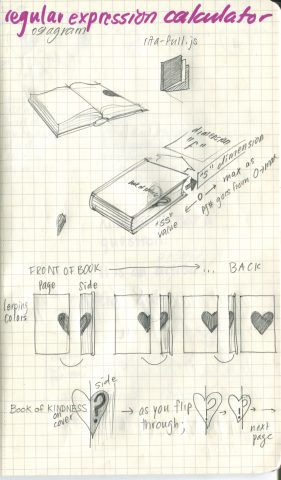

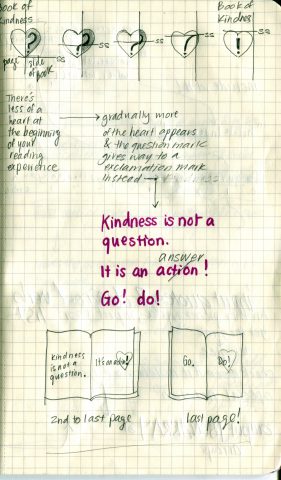

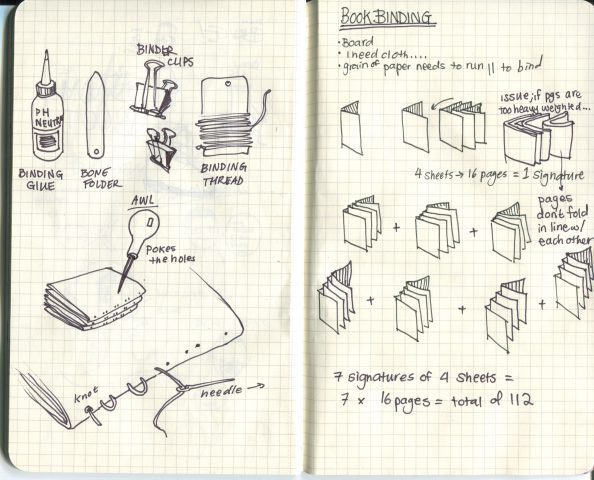

Hand Binding Notes

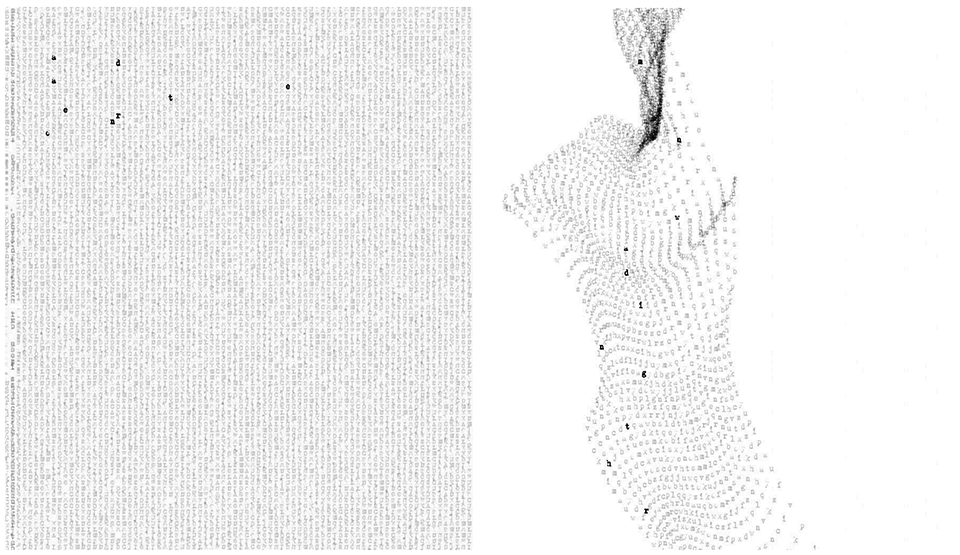

Experimenting with the appearance, opacity, and number of letters

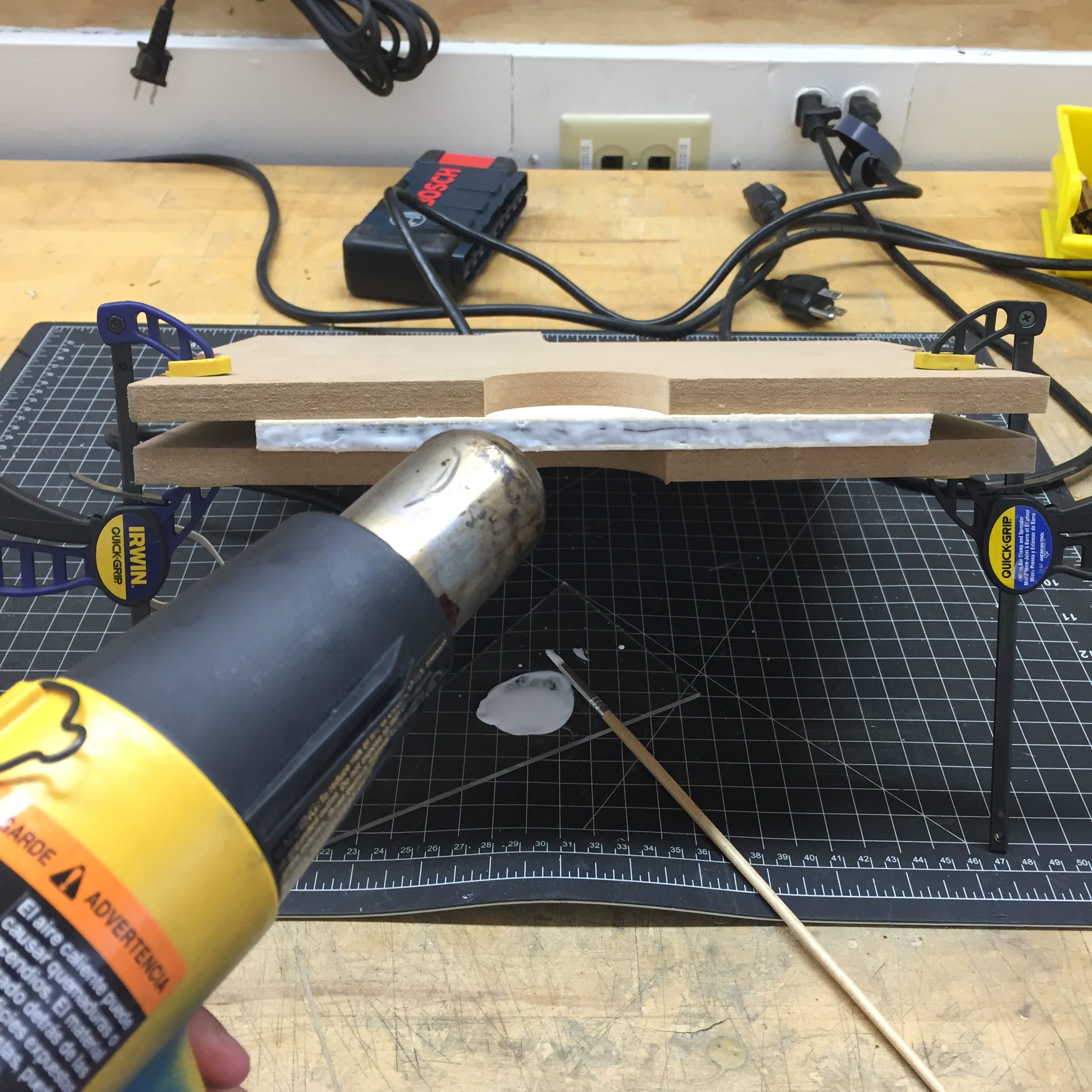

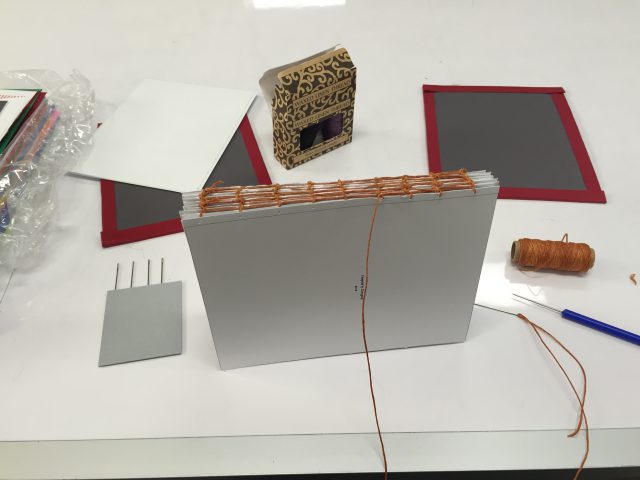

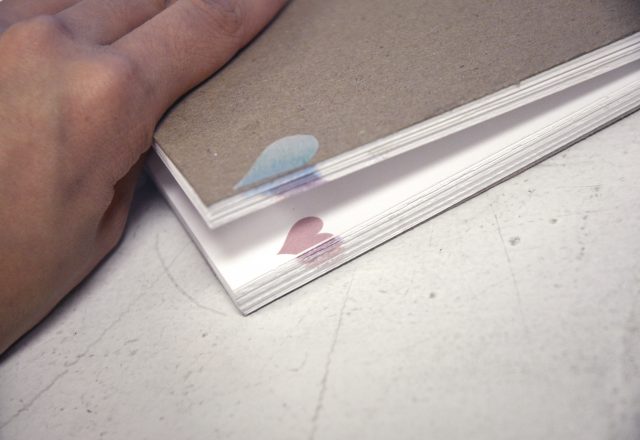

Hand Binding in Progress

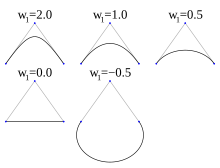

Rational Bezier Curve (From Wikipedia)

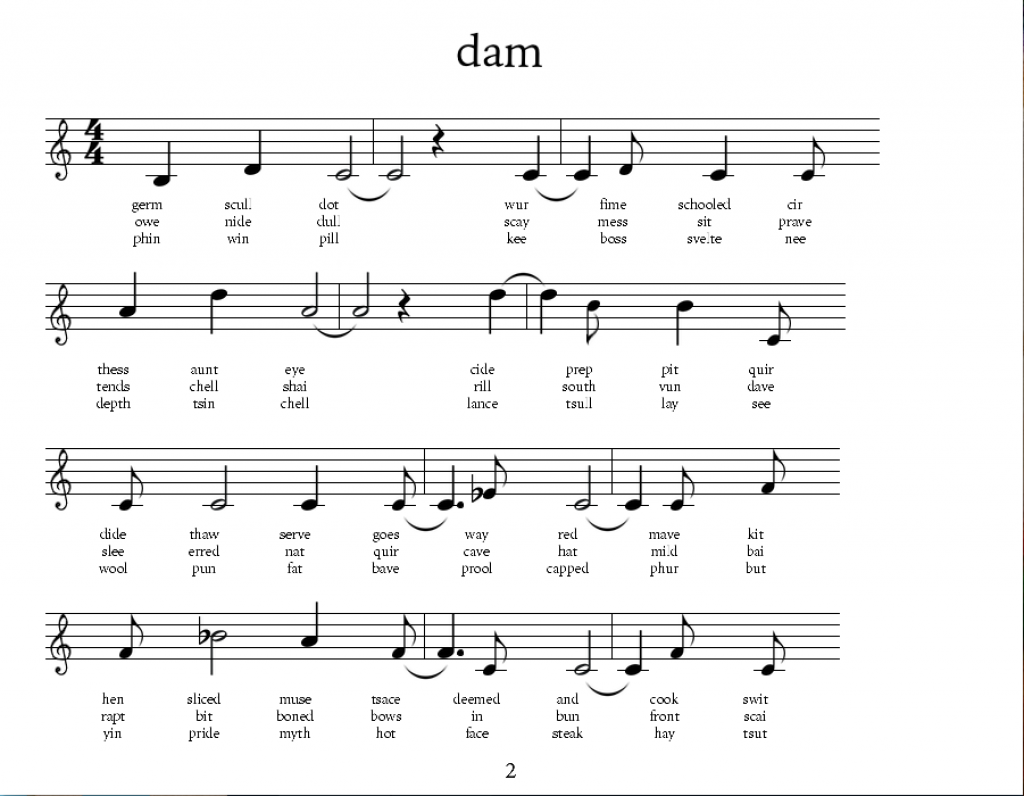

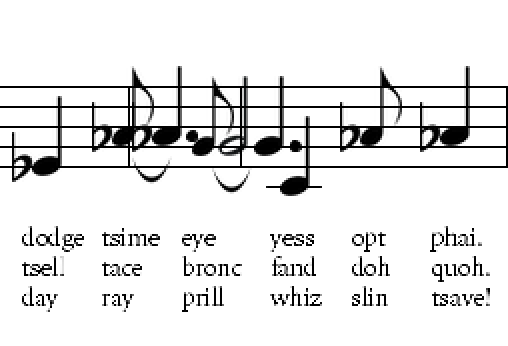

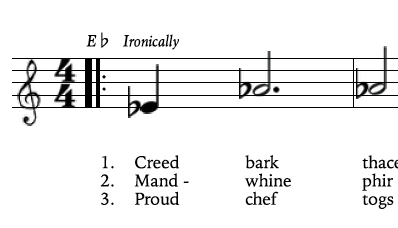

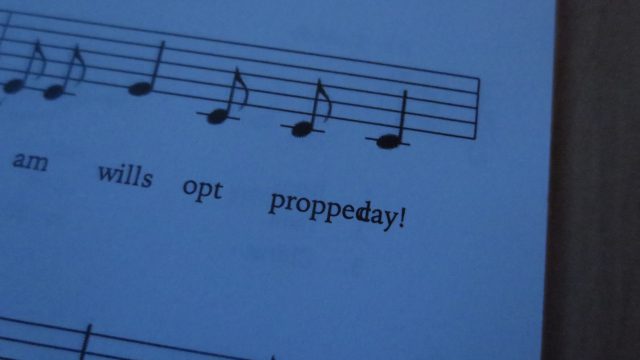

An early rough-draft of a verses page.

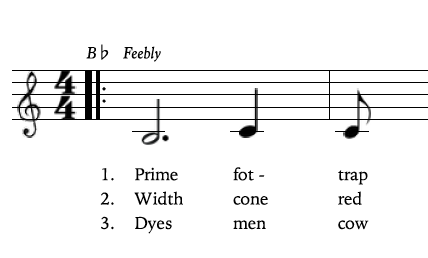

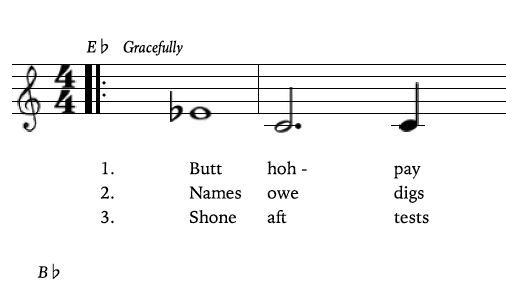

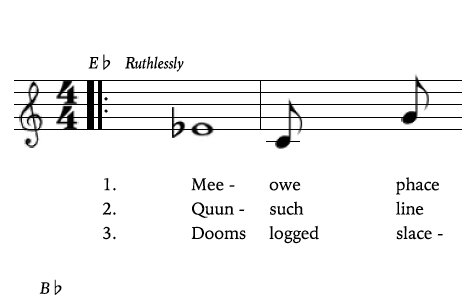

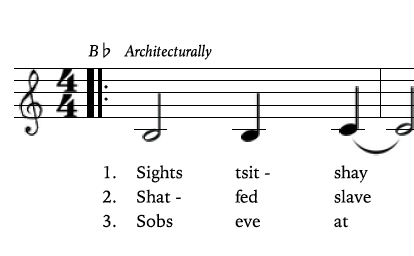

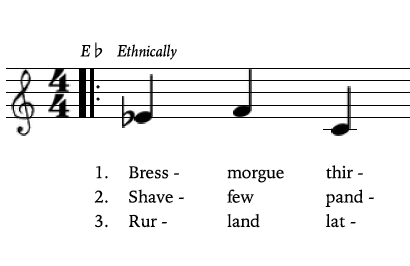

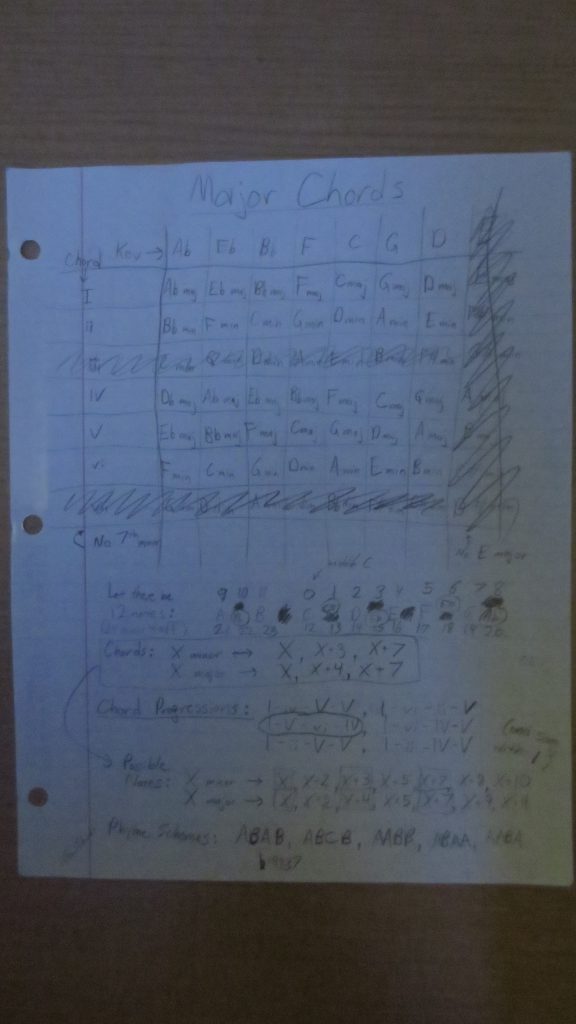

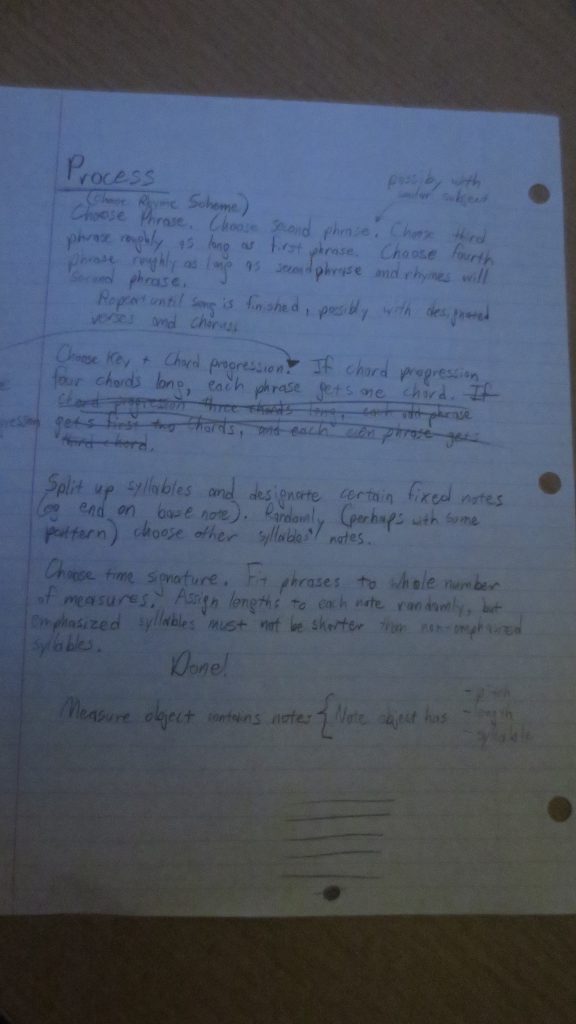

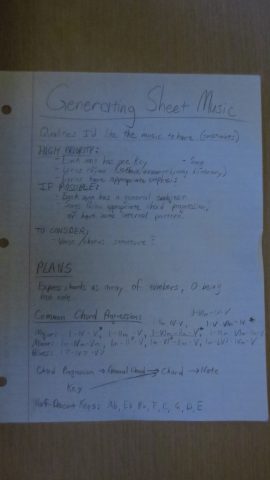

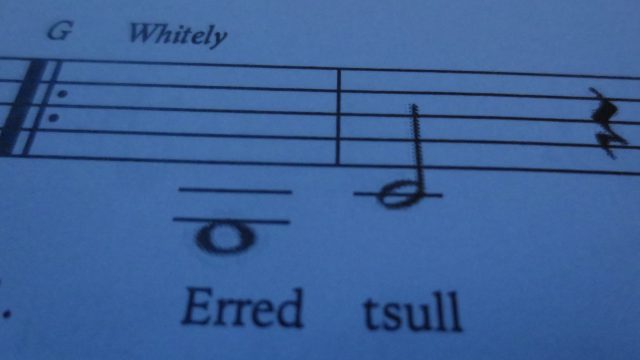

Some squished notes in early iterations of “Genesong”

7 different pdfs for the 7 different signatures needed
