My plan for my capstone project is to construct a working, tangible version of the interactive novel concept I prototyped for my Interactivity Project. That said, my sketch looks an awful lot like (read: the same as) the sketches I posted in my Project 3 deliverable post. For an overview of my intentions, please visit the IMISPHYX IV project page, and for a continually updating list of prior art that’s inspired me, try this link.

I’ve iterated slightly on my goals for the project, based upon feedback from the class and also on my own daydreams, of which I tend to have a ton. Moving forward, I hope to accomplish the following things:

1. implement the reacTIVision prototype.

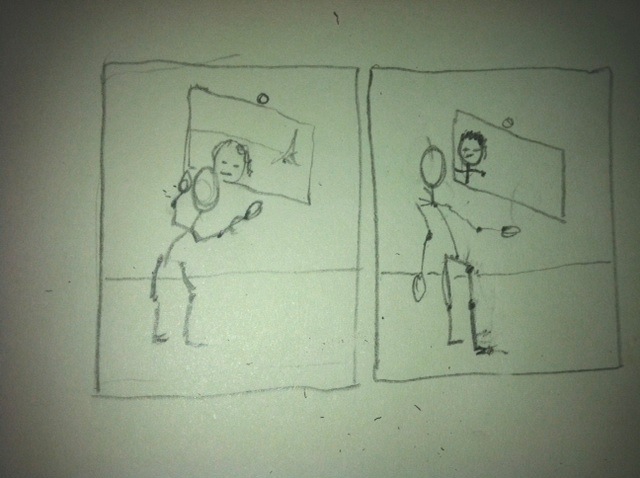

2. ditch the objects/props concept from the last iteration, and focus instead on illuminating the contrast between the dialogue that occurs among characters and the thoughts characters hold within them.

3. really push the way I display text upon the table to make it as engaging as possible.

I’m also still torn between using my own personal story, Imisphyx, for this project and proceeding with a story that has already been told, which would at least allow people to compare the interactive version to the original, static version. This would also eliminate my need to worry about perfecting the story at the same time I’m perfecting my interaction, although it *is* arguable that evolving both story and presentation simultaneously is the best way to go.

If I were to shy away from using ‘Imisphyx’, I would like to revisit the Alfred Bester novel I was toying with in my original Project 3 sketch. What’s nice about The Demolished Man is that it deals heavily with the exact themes I’m trying to explore in my interactive piece: the tension between what’s happening inside somebody’s head and what they’re actually saying out loud. I think this story could allow me to play with interesting visualizations of text in the characters’ ‘first person’, ‘internal’ mode.

For example, below is a passage from the book where the murderer, Ben Reich, is trying to get a very annoying song stuck in his head, so that the telepathic cop, Linc Powell, can’t pry beyond it into Reich’s mind to discover his guilt.

A tune of utter monotony filled the room with agonizing, unforgettable banality. It was the quintessence of every melodic cliche Reich had ever heard. No matter what melody you tried to remember, it invariably led down the path of familiarity to “Tenser, Said The Tensor.” Then Duffy began to sing:

Eight, sir; seven, sir;

Six, sir; five, sir;

Four, sir; three, sir;

Two, sir; one!

Tenser, said the Tensor.

Tenser, said the Tensor.

Tension, apprehension,

And dissension have begun.

“Oh my God!” Reich exclaimed.

“I’ve got some real gone tricks in that tune,” Duffy said, still playing. “Notice the beat after ‘one’? That’s a semicadence. Then you get another beat after ‘begun.’ That turns the end of the song into a semicadence, too, so you can’t ever end it. The beat keeps you running in circles, like: Tension, apprehension, and dissension have begun. RIFF. Tension, apprehension, and dissension have begun. RIFF. Tension, appre—”

“You little devil!” Reich started to his feet, pounding his palms on his ears. “I’m accursed. How long is this affliction going to last?”

“Not more than a month.”

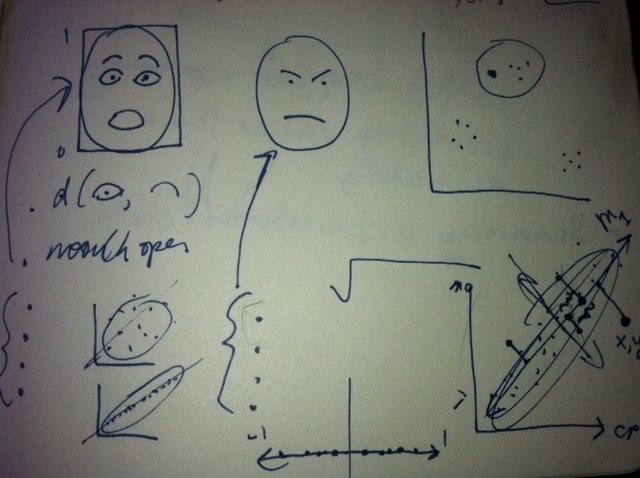

The description Bester provides of the nature of the song, the patterns it possesses, and its cyclical nature lends itself to some really awesome interactive portrayals. On the table, one could envision Reich’s character object set to ‘internal’ mode and suddenly emitting endless spirals of the annoying, mindworm tune. Perhaps every time Linc tries to pry into Reich’s head, Linc’s thoughts are physically deflected on the screen by the facade of Reich’s textual whirlpool. See below: