Lumar-MoCap

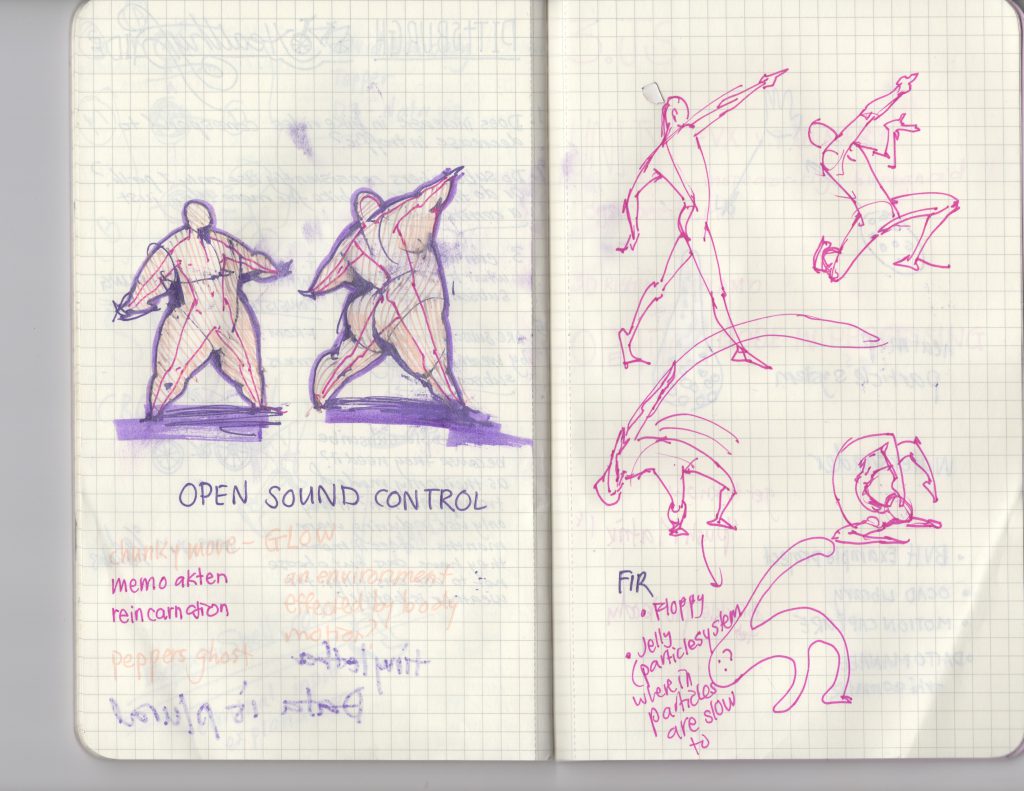

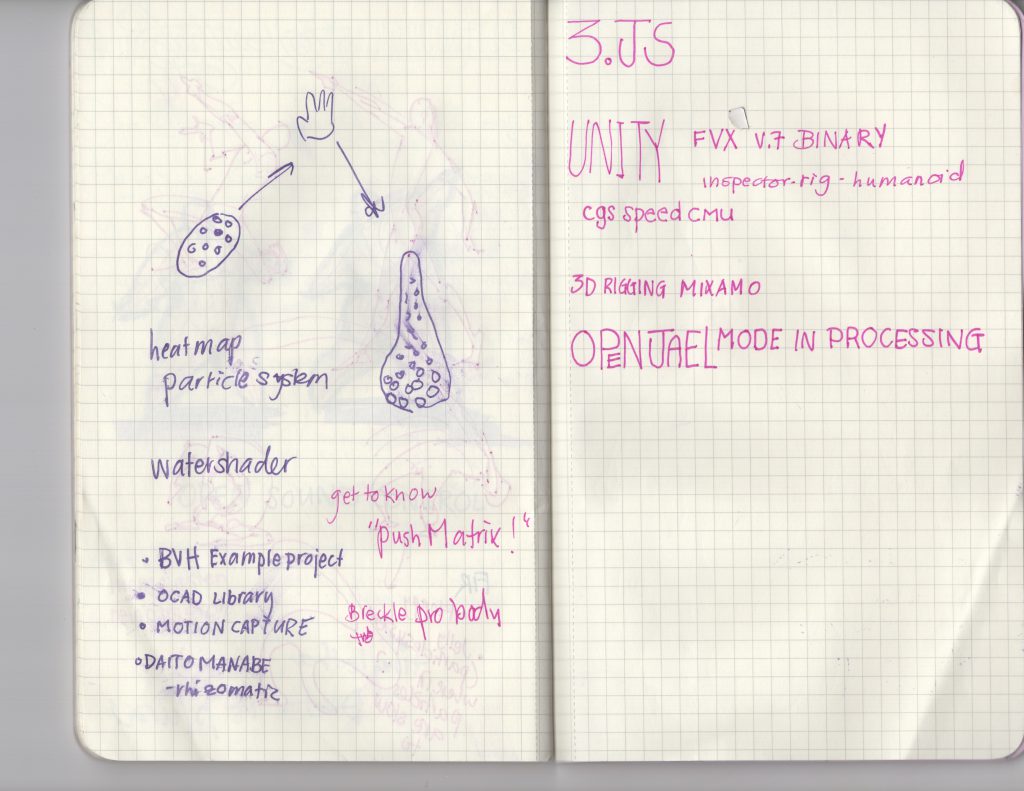

I wanted to create a program to find the kinetic energy used at each joint (not to be confused with velocity: kinetic energy depends on mass as well as the square of speed). It’s a foundation that is incredibly scalable. I spent a good chunk of the week trying to work out three.js and brainstorming ideas… actually, I spent a little too much time on that. By the time I gave up on getting three.js up and running, it was crunch time for crash-coursing 3D in Processing.

Based off kinetic energy, there could’ve been a particle system that reacted to higher levels, wherein greater motion would generate more particles. Or rather an environment: tiny particles at each unit of the 3D grid, where the gravitational pull of a part of the body increases according to its change in kinetic energy. I thought that would be the most interesting because the person would be ‘there’ in the environment by… ‘not’ being there. The negative space within the environment, saturated with floating particles, would indicate the human form, but it would be more dynamic and better understood while in motion because the particles would gravitate towards it.

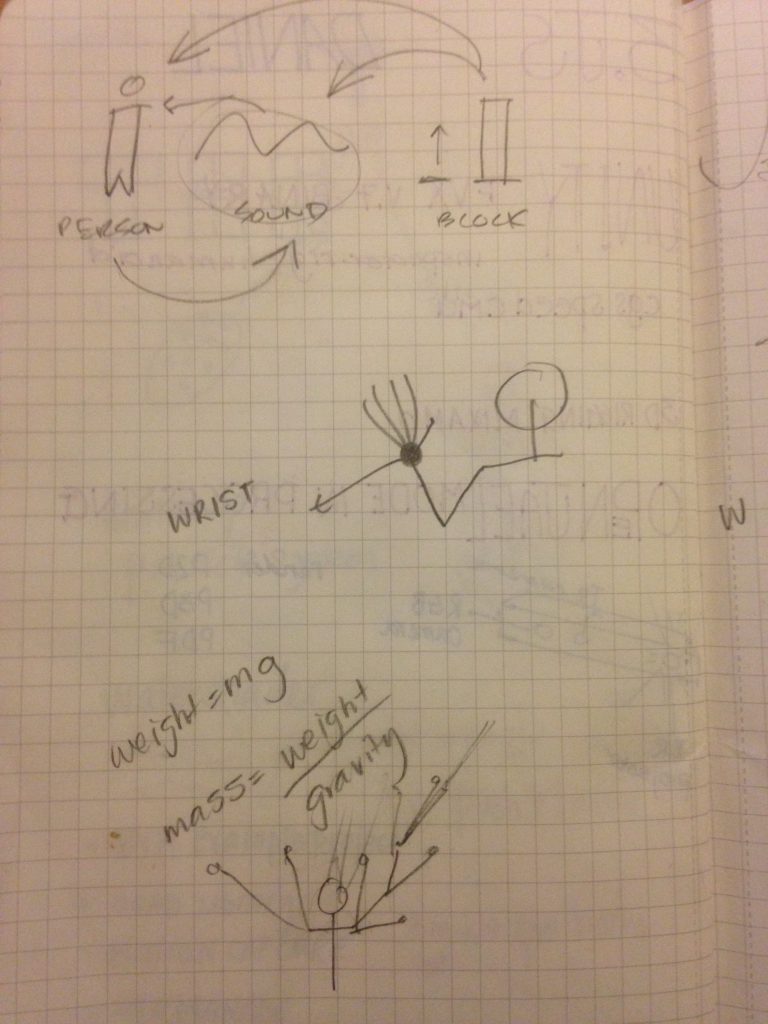

From an interaction standpoint, with this hooked up to real-time data, people with larger mass would have comparatively larger gravitational pulls if they moved at the same speed as someone of smaller stature. With multiple people, there is an interesting competition over whose kinetic energy draws in more of the floating ‘star’ particles within the void.

etc, etc, etc, idea after idea: different modes for different ‘planets’ (planetary pull affects weight), comparing motion, calculating joules from kinetic energy and, based off weight and mass assumptions, visualizing calorie consumption, etc, etc

etc, etc

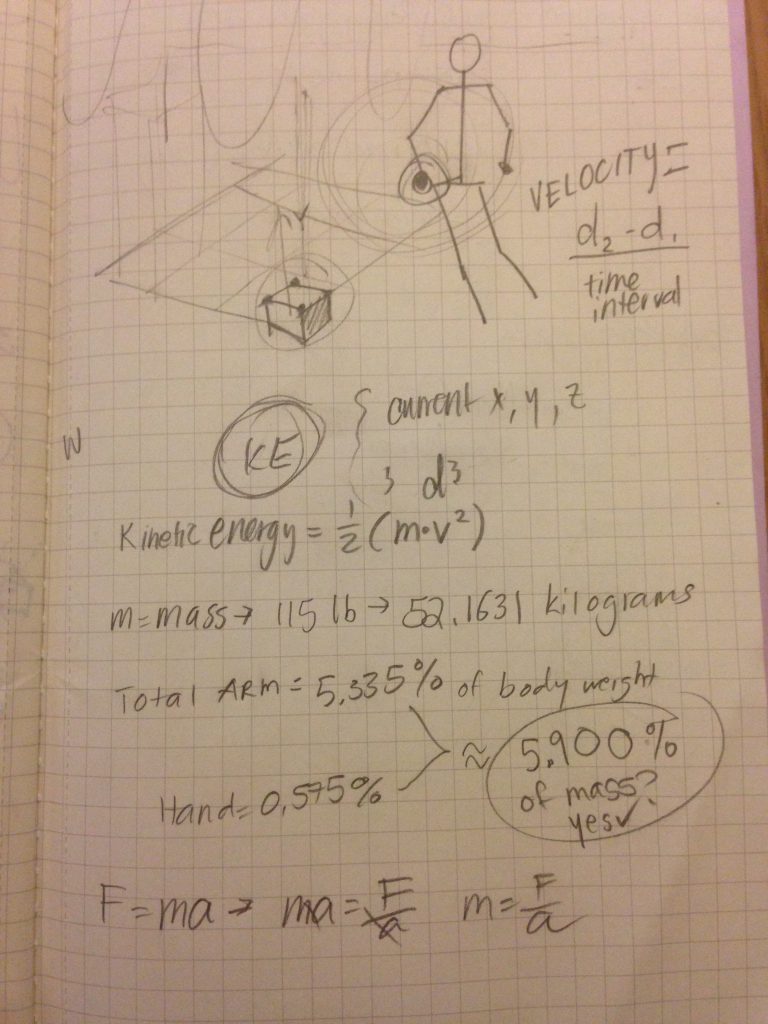

But having lots of ideas is sometimes a detriment – there is too much to know where to focus. So I started off with just calculating KE.
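For reference, here is a minimal sketch of what "just calculating KE" for one joint could look like, written in plain Java so it can be pasted into a Processing sketch. It is not the project's actual code: the class name `JointEnergy`, the 30 fps frame time, the 1.5 kg limb mass, and the assumption that positions are in metres are all made up for illustration (BVH files use whatever units the capture was exported in).

```java
// Illustrative sketch only: kinetic energy of one joint from two successive frames.
class JointEnergy {

    /** Speed in m/s from two positions (assumed metres) sampled dt seconds apart. */
    static double speed(double[] prev, double[] curr, double dt) {
        double dx = curr[0] - prev[0];
        double dy = curr[1] - prev[1];
        double dz = curr[2] - prev[2];
        return Math.sqrt(dx * dx + dy * dy + dz * dz) / dt;
    }

    /** KE = 1/2 * m * v^2, in joules when mass is in kg and speed in m/s. */
    static double kineticEnergy(double massKg, double speed) {
        return 0.5 * massKg * speed * speed;
    }

    public static void main(String[] args) {
        double[] prev = {0.00, 1.00, 0.0}; // hypothetical joint position, frame n-1
        double[] curr = {0.03, 1.04, 0.0}; // hypothetical joint position, frame n
        double dt = 1.0 / 30.0;            // assumed frame time (30 fps)
        double limbMassKg = 1.5;           // assumed mass attributed to this joint

        double v = speed(prev, curr, dt);
        System.out.println("speed (m/s): " + v);
        System.out.println("KE (J): " + kineticEnergy(limbMassKg, v));
    }
}
```

Because speed is squared, doubling a joint's speed quadruples its kinetic energy, while doubling the mass only doubles it.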

In impromptu collaboration with the fabulous Guodu:

The idea we came up with had a focus on the interaction design. We first built the foundation: being able to calculate the kinetic energy of each limb.

Originally, we wanted the kinetic energy of each limb to be expressed by an iridescent bar chart. The individual bars would represent different parts of the body, and change colors and height according to the limb’s kinetic energy (the limb would as well, so there would be some sense of what represents what).

The bars going up and down would look like the waveforms of audio files. While it wouldn’t have matched exactly, we were really looking forward to manipulating the amplitude and frequency of sound waves in Processing according to kinetic energy. This way, anyone could dance ‘in sync’ with the music. Never worry about missing a beat when the music is generated according to your dance!

We had some trouble with transformations (translation/orientation) in 3D space. Great learning experience together! Neither of us had ever touched 3D programming, or 3D in general, before this assignment, so I’m happy that we had the chance to crash-course through it.

Here we put BVH files of ourselves dancing:

THE BLOG STOPPED TAKING MEDIA UPLOADS. ERROR ERROR ERROR – THE REST OF THIS IS AVAILABLE ELSEWHERE –

Did you notice it was smoother? We changed our formula to make the reactions of the balls smoother. Since we were only smoothing distance with a running average, and kinetic energy grows with velocity squared, the easing had little to no effect. We decided that instead of expressing kinetic energy, we would just visualize velocity.
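To illustrate the kind of easing involved, here is a small sketch of the common "move a fraction of the way toward the target each frame" idiom, applied directly to the value being drawn (for example, a joint's speed). This is an assumption about one way to do it, not our exact formula: the class name `EasedValue` and the easing factor are hypothetical.

```java
// Illustrative sketch only: exponential easing of a displayed value.
class EasedValue {
    private double value;        // current smoothed value
    private final double easing; // hypothetical factor in (0, 1]; smaller = smoother

    EasedValue(double initial, double easing) {
        this.value = initial;
        this.easing = easing;
    }

    /** Moves the smoothed value a fraction of the way toward the new reading. */
    double update(double target) {
        value += (target - value) * easing;
        return value;
    }

    public static void main(String[] args) {
        EasedValue speed = new EasedValue(0.0, 0.5);
        double[] readings = {10.0, 10.0, 0.0}; // made-up per-frame speeds
        for (double r : readings) {
            System.out.println(speed.update(r));
        }
    }
}
```

Easing the drawn value itself, rather than only the inputs, keeps a single jumpy frame from dominating. That matters more for kinetic energy than for velocity: a reading that spikes to three times its neighbors becomes nine times as large once squared.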

Overall, I can’t say I am too proud of how the end result translated the movement visually. But I am happy to have had this opportunity as my first exposure to 3D programming/animation/anything.

For documentation purposes, I will try to get another BVH file rendered of someone moving their body parts one by one to show how the program works, before recording a live video of someone interacting with it and the computational results in sound and visuals being shown.

I’m excited to use the velocity data to inform the physics of a particle/cloud system.

The code for now: